The European Union Artificial Intelligence Act (EU AI Act) is the first comprehensive legal framework to regulate the design, development, deployment, and use of AI systems within the European Union. The primary goals of this legislation are to:

- Ensure the safe and ethical use of AI

- Protect fundamental rights

- Foster innovation by setting clear rules, most importantly for high-risk AI applications

The AI Act brings structure to the legal landscape for companies that rely, directly or indirectly, on AI-driven solutions. It is a comprehensive approach to AI regulation by international standards and will affect businesses and developers far beyond the European Union's borders.

In this article, we take a close look at the EU AI Act: its guidelines, what may be expected of companies, and the broader implications the Act will have for the business ecosystem.

About us: Viso Suite provides an all-in-one platform for companies to perform computer vision tasks in a business setting. From people tracking to inventory management, Viso Suite helps solve challenges across industries. To learn more about Viso Suite's enterprise capabilities, book a demo with our team of experts.

What is the EU AI Act? A High-Level Overview

The European Commission published a regulatory proposal in April 2021 to create a uniform legislative framework for the regulation of AI applications across its member states. After more than three years of negotiation, the regulation was published on 12 July 2024 and entered into force on 1 August 2024.

The following is a four-point summary of the Act:

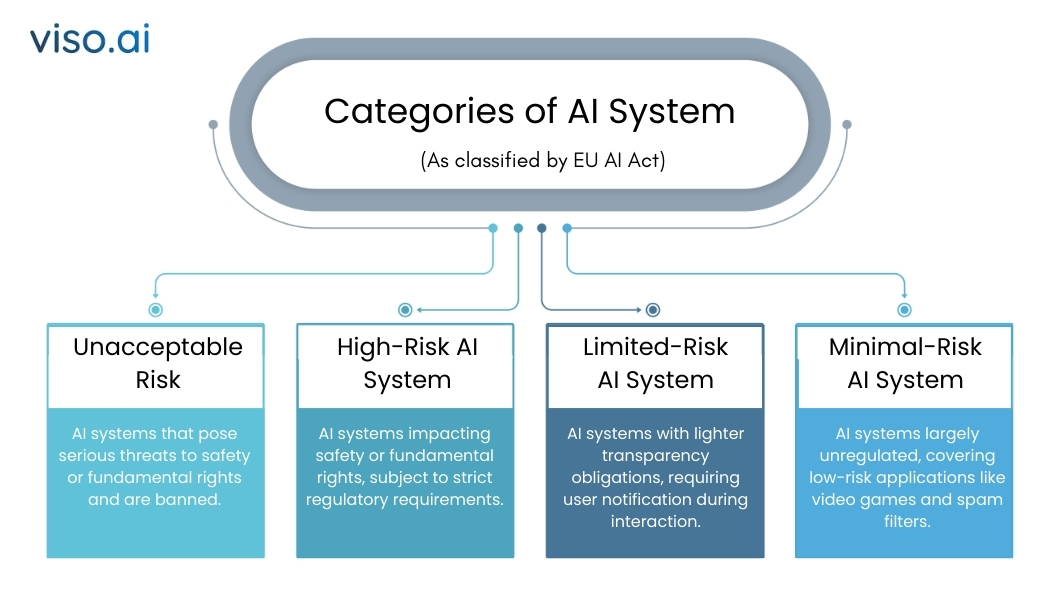

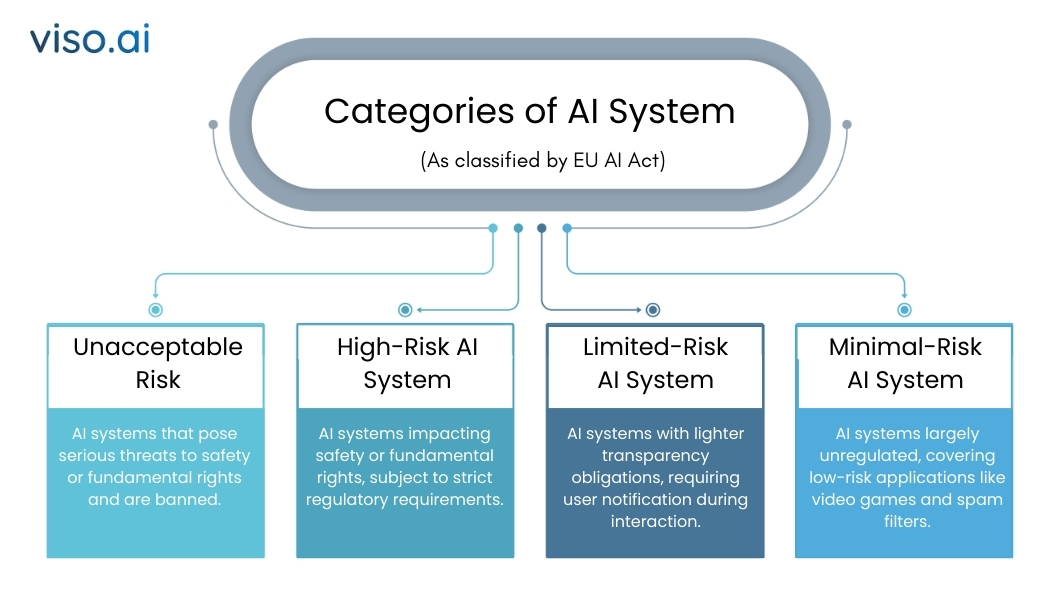

Risk-based Classification of AI Systems

The risk-based approach classifies AI systems into one of four risk categories:

Unacceptable Risk:

AI systems that pose a grave danger to safety and fundamental rights. This includes any system that applies social scoring or manipulative AI practices.

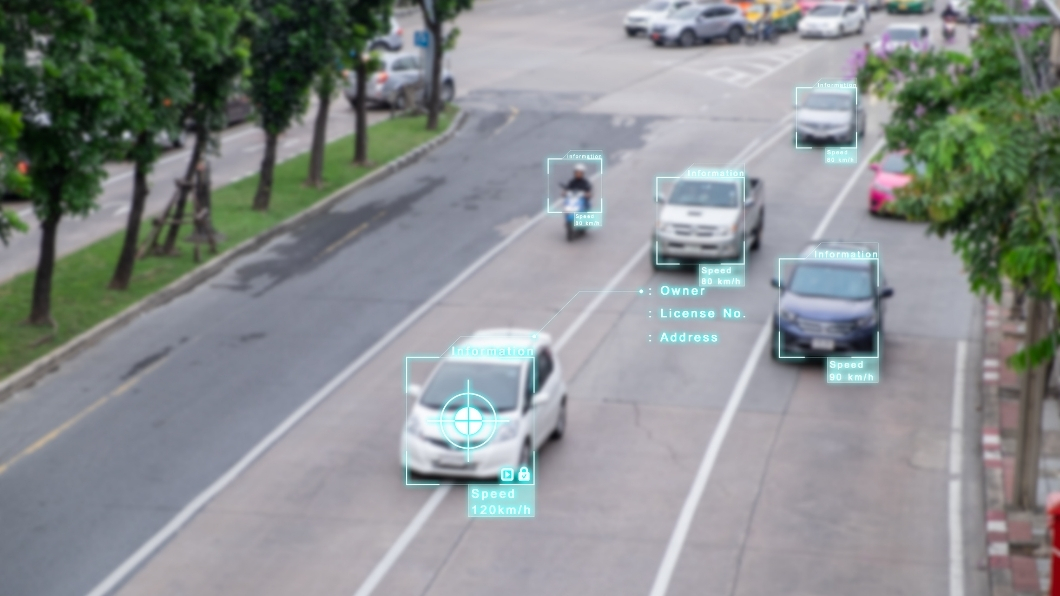

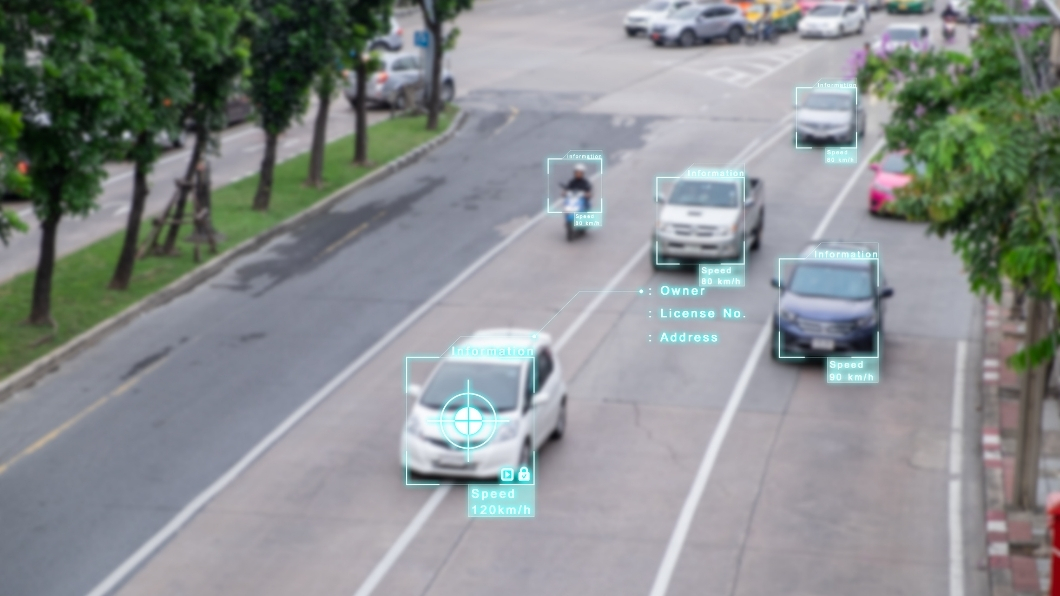

High-Risk AI Systems:

This covers AI systems with a direct impact on safety or fundamental rights. Examples include systems in the healthcare, law enforcement, and transportation sectors, along with other critical areas. These systems are subject to the most rigorous regulatory requirements, which may include conformity assessments, mandatory human oversight, and the adoption of robust risk management systems.

Limited Risk:

Limited-risk systems face lighter transparency requirements; however, developers and deployers must make sure end users are told that AI is involved, for instance with chatbots and deepfakes.

Minimal Risk AI Systems:

Most of these systems are currently unregulated; examples include AI in video games and spam filters. However, as generative AI matures, changes to the regulatory regime for such systems are not ruled out.
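The four tiers above can be written down as a small lookup structure. The following is a minimal sketch only: the names `RiskTier`, `TIER_CONSEQUENCE`, `EXAMPLE_USE_CASES`, and `describe` are hypothetical, the use-case labels are illustrative examples taken from this section, and real classification requires legal analysis of the Act rather than a dictionary lookup.

```python
from enum import Enum


class RiskTier(Enum):
    """The four risk categories described in this section."""
    UNACCEPTABLE = "unacceptable"
    HIGH = "high"
    LIMITED = "limited"
    MINIMAL = "minimal"


# Headline consequence of each tier, paraphrased from the text above.
TIER_CONSEQUENCE = {
    RiskTier.UNACCEPTABLE: "prohibited",
    RiskTier.HIGH: "conformity assessment, human oversight, risk management",
    RiskTier.LIMITED: "transparency: tell end users that AI is involved",
    RiskTier.MINIMAL: "currently largely unregulated",
}

# Hypothetical labels for illustration only; not a legal classification.
EXAMPLE_USE_CASES = {
    "social_scoring": RiskTier.UNACCEPTABLE,
    "medical_diagnosis": RiskTier.HIGH,
    "customer_chatbot": RiskTier.LIMITED,
    "spam_filter": RiskTier.MINIMAL,
}


def describe(use_case: str) -> str:
    """Return a one-line summary of the tier and consequence for a use case."""
    tier = EXAMPLE_USE_CASES[use_case]
    return f"{use_case}: {tier.value} risk -> {TIER_CONSEQUENCE[tier]}"
```

A structure like this is mainly useful as an internal inventory: listing each AI system a company builds or deploys next to its presumed tier makes it easier to see which obligations may apply.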

Obligations on Providers of High-Risk AI:

Most of the compliance burden falls on developers (providers). These obligations apply to any developer that markets or operates high-risk AI models within, or placed into, the European Union, whether the developer is based inside or outside the EU.

Compliance with these regulations also extends to high-risk AI systems provided from third countries whose output is used within the Union.

User's Obligations (Deployers):

Users are any natural or legal persons deploying an AI system in a professional context. Users (deployers) have less stringent obligations than developers. These obligations nonetheless apply when they deploy high-risk AI systems in the Union or when the output of their system is used in the Union.

All these obligations apply to users based both in the EU and in third countries.

General-Purpose AI (GPAI):

Developers of general-purpose AI models must provide technical documentation and instructions for use, and must comply with copyright law. Their AI model should not create a systemic risk.

Free and open-license providers of GPAI only need to comply with the copyright and training-data publication requirements, unless their AI model creates a systemic risk.

Whether licensed or not, GPAI models that present systemic risks must undergo the same model evaluation, adversarial testing, incident tracking and monitoring, and cybersecurity practices.

What Can Be Expected From Companies?

Organizations using or developing AI technologies should be prepared for significant changes in compliance, transparency, and operational oversight. They can prepare for the following:

High-Risk AI Control Requirements:

Companies deploying high-risk AI systems will be responsible for strict documentation, testing, and reporting. They will be expected to carry out ongoing risk assessment and to maintain quality management systems and human oversight. This, in turn, requires proper documentation of the system's functionality, safety, and compliance. Non-compliance can attract heavy fines under the Act, much as it does under the GDPR.

Transparency Requirements:

Companies will have to make clear to users when they are dealing with an AI system, including in limited-risk cases where this would not otherwise be obvious. This strengthens user autonomy and supports the EU's principles of transparency and fairness. The rule also covers content such as deepfakes: companies must disclose when something is AI-generated or AI-modified.

Data Governance and AI Training Data:

AI systems must be trained, validated, and tested with diverse, representative, and unbiased datasets. This will require businesses to examine their data sources more carefully and move toward far more rigorous data governance so that AI models yield nondiscriminatory outcomes.

Impact on Product Development and Innovation:

The Act subjects AI developers to more extensive testing and validation procedures, which may slow the pace of development. Companies that build compliance measures into the early stages of their AI product lifecycle will have a key differentiator in the long run. Strict regulation may curtail the pace of AI innovation at first, but businesses able to adapt quickly to these standards will find themselves well positioned to expand confidently into the EU market.

Guidelines to Know About

Companies have to adhere to the following key guidelines to comply with the EU Artificial Intelligence Act:

Timeline for Enforcement

The EU AI Act sets out a phased enforcement schedule to give organizations time to adapt to the new requirements.

- 1 August 2024: The Act officially enters into force.

- 2 February 2025: AI systems falling under the "unacceptable risk" category are banned.

- 2 May 2025: Codes of conduct apply. These codes guide AI developers on best practices for complying with the Act and aligning their operations with EU principles.

- 2 August 2025: Governance rules and obligations for general-purpose AI (GPAI) come into force. GPAI systems, including large language models and generative AI, face specific transparency and safety requirements. At this stage these requirements are not yet enforced against such systems; providers are instead given time to prepare.

- 2 August 2026: Full implementation of GPAI commitments begins.

- 2 August 2027: Requirements for high-risk AI systems fully apply, giving companies more time to align with the most demanding parts of the regulation.
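The phased schedule above lends itself to a simple date lookup. The sketch below is illustrative only: the names `MILESTONES`, `milestones_reached`, and `next_milestone` are hypothetical, the labels are shortened from the list above, and the entry-into-force date uses 1 August 2024 as stated in the overview section.

```python
from datetime import date

# Milestones as listed in the timeline above (labels shortened).
# Entry into force uses 1 August 2024, per the overview section.
MILESTONES = [
    (date(2024, 8, 1), "Act enters into force"),
    (date(2025, 2, 2), "Unacceptable-risk AI systems banned"),
    (date(2025, 5, 2), "Codes of conduct apply"),
    (date(2025, 8, 2), "GPAI governance rules in force"),
    (date(2026, 8, 2), "Full implementation of GPAI commitments"),
    (date(2027, 8, 2), "High-risk AI requirements fully apply"),
]


def milestones_reached(as_of: date) -> list[str]:
    """Return the labels of all milestones dated on or before `as_of`."""
    return [label for when, label in MILESTONES if when <= as_of]


def next_milestone(as_of: date):
    """Return the earliest (date, label) strictly after `as_of`, or None."""
    upcoming = [m for m in MILESTONES if m[0] > as_of]
    return min(upcoming) if upcoming else None
```

For example, calling `milestones_reached(date(2025, 3, 1))` returns the first two labels, and `next_milestone(date(2025, 3, 1))` returns the 2 May 2025 entry. A compliance team could use a table like this to flag which phases already apply to a product on a given release date.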

Risk Management Systems

Providers of high-risk AI must establish a risk management system that provides for continuous monitoring of AI performance, periodic compliance assessments, and fallback plans in case an AI system operates improperly or malfunctions.

Post-Market Surveillance

Companies will be required to maintain post-market monitoring programs for as long as the AI system is in use. This ensures ongoing compliance with the requirements outlined in their applications. It includes activities such as gathering feedback, analyzing operational data, and routine auditing.

Human Oversight

The Act requires high-risk AI systems to provide for human oversight. That is, humans need to be able to intervene in or override AI decisions where necessary; in healthcare, for instance, an AI diagnosis or treatment recommendation must be checked by a healthcare professional before it is applied.
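One way to picture the healthcare example is a gate that refuses to act on an AI recommendation until a human has reviewed it. This is a minimal sketch under stated assumptions, not a prescribed design: the `Recommendation` class and the `review` and `apply_recommendation` functions are hypothetical names introduced here for illustration, and the Act does not mandate any particular implementation.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Recommendation:
    """An AI suggestion awaiting human review (hypothetical structure)."""
    patient_id: str
    ai_suggestion: str
    reviewer: Optional[str] = None        # who reviewed it, if anyone
    final_decision: Optional[str] = None  # set only after human review


def review(rec: Recommendation, reviewer: str,
           override: Optional[str] = None) -> Recommendation:
    """Record a human decision: accept the AI suggestion or override it."""
    rec.reviewer = reviewer
    rec.final_decision = override if override is not None else rec.ai_suggestion
    return rec


def apply_recommendation(rec: Recommendation) -> str:
    """Refuse to act on any recommendation a human has not reviewed."""
    if rec.reviewer is None or rec.final_decision is None:
        raise PermissionError(
            "human review required before applying an AI recommendation"
        )
    return rec.final_decision
```

The design choice here is that the unreviewed path fails loudly rather than silently falling back to the AI output, and that the reviewer's identity is stored alongside the decision so it can be audited later.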

Registration of High-Risk AI Systems

High-risk AI systems must be registered in the EU database, giving authorities and the public access to relevant information about the deployment and operation of the AI system.

Third-Party Assessment

Third-party assessments of some AI systems may be needed before deployment, depending on the risk involved. Audits, certification, and other forms of evaluation would confirm their conformity with EU regulations.

Impact on Business Landscape

The introduction of the EU AI Act is expected to have far-reaching effects on the business landscape.

Leveling the Playing Field

The Act will level the playing field for businesses by imposing the same safety and transparency rules on companies of all sizes. This could also give smaller AI-driven businesses a significant advantage.

Building Trust in AI

The new EU AI Act is likely to build consumer confidence in AI technologies by enshrining transparency and safety in its provisions. Businesses that follow these regulations can use this trust as a differentiator, marketing their services as ethical and responsible AI providers.

Possible Compliance Costs

For some businesses, particularly smaller ones, the cost of compliance could be prohibitive. Conforming to the new regulatory environment may well require heavy investment in compliance infrastructure, data governance, and human oversight. Fines for non-compliance can go as high as 7% of global revenue, a financial risk companies cannot afford to overlook.

Increased Accountability in Cases of AI Failure

Businesses will be held more accountable when an AI system fails or is misused in a way that harms people or a community. Companies may also face increased legal liability if they do not test and monitor AI applications properly.

Geopolitical Implications

The EU AI Act may ultimately set a leading global example for regulating AI. Non-EU companies active in the EU market are subject to its rules, which fosters international cooperation and alignment on AI standards. It may also prompt other jurisdictions, such as the United States, to take similar regulatory steps.

Frequently Asked Questions

Q1. According to the EU AI Act, which are the high-risk AI systems?

A: High-risk AI systems are applications in fields that directly affect an individual citizen's safety, rights, and freedoms. This includes AI in critical infrastructure, such as transport; in healthcare, such as diagnosis; in law enforcement, including biometrics; in employment processes; and in education. These systems are subject to strict compliance requirements, such as risk assessment, transparency, and continuous monitoring.

Q2. Does every business developing AI have to follow the EU AI Act?

A: Not all AI systems are regulated uniformly. The Act classifies AI systems into categories according to their potential for risk: unacceptable, high, limited, and minimal risk. The legislation imposes strict compliance requirements only on high-risk AI systems and basic transparency requirements on limited-risk systems, while minimal-risk AI systems, which include applications such as video games and spam filters, remain largely unregulated.

Businesses developing high-risk AI must comply if their AI is deployed in the EU market, whether they are based inside or outside the EU.

Q3. How does the EU AI Act affect companies outside the EU?

A: The EU AI Act applies to companies established outside the Union when their AI systems are deployed or used within the Union. For example, if an AI system developed in a third country produces outputs used within the Union, it must comply with the requirements of the Act. In this way, all AI systems affecting EU citizens must meet the same regulatory bar, no matter where they are built.

Q4. What are the penalties for non-compliance with the EU AI Act?

A: The EU AI Act punishes non-compliance with significant fines. For severe infringements, such as the use of prohibited AI systems and non-compliance with obligations for high-risk AI, fines of up to 7% of the company's total worldwide annual turnover or €35 million apply, whichever is higher.
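To make the 7% and €35 million figures concrete, here is a minimal sketch of the ceiling arithmetic. It assumes the cap is the higher of the two amounts; the function name `max_fine_eur` and the constants are hypothetical, and the result is an upper bound, not a prediction of any actual fine.

```python
# Figures quoted in the answer above for the most severe infringements.
TURNOVER_RATE_PERCENT = 7          # percent of worldwide annual turnover
FIXED_AMOUNT_EUR = 35_000_000      # fixed alternative in euros


def max_fine_eur(worldwide_annual_turnover_eur: float) -> float:
    """Upper bound on the fine, assuming the higher of the two figures applies."""
    if worldwide_annual_turnover_eur < 0:
        raise ValueError("turnover cannot be negative")
    turnover_based = worldwide_annual_turnover_eur * TURNOVER_RATE_PERCENT / 100
    return max(turnover_based, FIXED_AMOUNT_EUR)
```

Under this assumption, a company with €100 million in turnover faces a ceiling of €35 million (since 7% is only €7 million), while a company with €1 billion in turnover faces a ceiling of €70 million. The two figures meet at a turnover of €500 million.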
