
In the Gen-AI world, you can now experiment with different hairstyles and create a new look for yourself. Whether you are contemplating a drastic change or simply seeking a fresh look, imagining yourself with a new hairstyle can be both exciting and daunting. With artificial intelligence (AI), however, hairstyling transformations are changing dramatically.

Imagine being able to explore an endless array of hairstyles, from classic cuts to '90s styles, all from the comfort of your own home. This is now possible thanks to AI-powered virtual hairstyling platforms. Using advanced algorithms and machine learning, these platforms let users digitally try on various hairstyles in real time, providing a seamless and immersive experience.

In this article, we will explore HairFastGAN and see how AI is changing the way we experiment with our hair. Whether you're a beauty enthusiast eager to explore new trends or someone contemplating a bold hair makeover, join us on a journey through the world of AI-powered virtual hairstyles.

Introduction

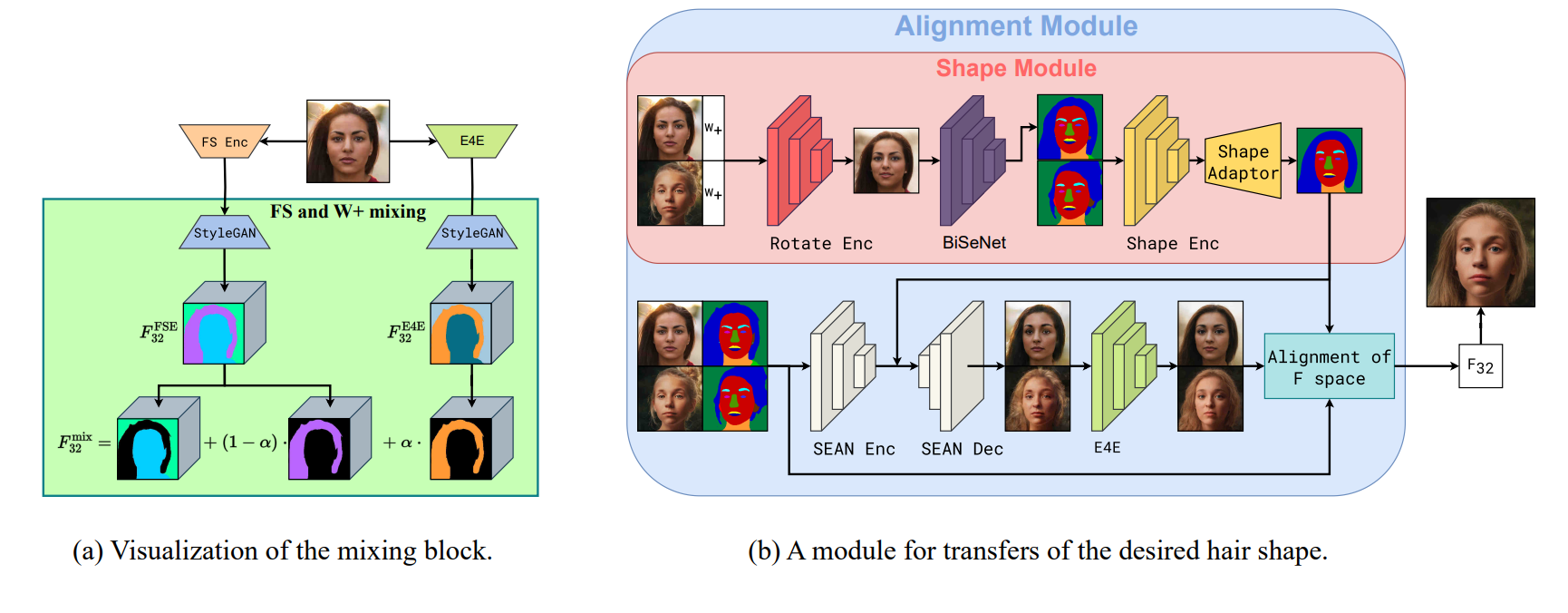

The HairFastGAN paper introduces HairFast, a novel model designed to simplify the complex task of transferring hairstyles from reference images to personal photos for virtual try-on. Unlike existing methods that are either too slow or sacrifice quality, HairFast excels in both speed and reconstruction accuracy. By operating in StyleGAN's FS latent space and incorporating enhanced encoders and inpainting techniques, HairFast achieves high-resolution results in near real time, even when faced with challenging pose differences between source and target images. The approach outperforms existing methods in realism and quality while transferring hairstyle shape and color in less than a second.

Thanks to advancements in Generative Adversarial Networks (GANs), we can now use them for semantic face editing, which includes changing hairstyles. Hairstyle transfer is a particularly tricky and fascinating part of this field. Essentially, it involves taking attributes such as hair color, shape, and texture from one image and applying them to another while keeping the person's identity and background intact. Understanding how these attributes work together is crucial for getting good results. This kind of editing has many practical uses, whether you're a professional working with photo editing software or just someone playing virtual reality or computer games.

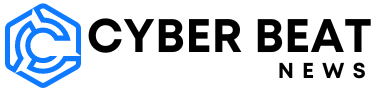

The HairFast method is a fast, high-quality solution for changing hairstyles in photos. It can handle high-resolution images and produces results comparable to the best existing methods. It is also quick enough for interactive use, thanks to its efficient use of encoders. The method works in four steps: embedding, alignment, blending, and post-processing. Each step is handled by a dedicated encoder trained for that specific job.

Recent advancements in GANs, such as ProgressiveGAN, StyleGAN, and StyleGAN2, have greatly improved image generation, particularly for highly realistic human faces. However, achieving high-quality, fully controlled hair editing remains a challenge.

Different methods address this challenge in different ways. Some focus on balancing editability and reconstruction fidelity through latent space embedding techniques, while others, like Barbershop, decompose the hair transfer task into embedding, alignment, and blending subtasks.

Approaches like StyleYourHair and StyleGANSalon aim for greater realism by incorporating local style matching and pose alignment losses. Meanwhile, HairNet and HairCLIPv2 handle complex poses and diverse input formats.

Encoder-based methods, such as MichiGAN and HairFIT, speed up runtime by training neural networks instead of using optimization processes. CtrlHair, a standout model, uses encoders to transfer color and texture, but it still struggles with complex facial poses and is slow because of inefficient post-processing.

Overall, while significant progress has been made in GAN-based hair editing, there are still hurdles to overcome before results are seamless and efficient across a wide range of scenarios.

Methodology Overview

This novel method for transferring hairstyles is very similar to the Barbershop approach; however, all optimization processes are replaced with trained encoders for better efficiency. In the Embedding module, representations of the original images are captured in StyleGAN spaces, such as W+ for editing and FS space for detailed reconstruction. Additionally, face segmentation masks are computed for later use.

In the Alignment module, the hairstyle shape is transferred from one image to another, mainly by changing the tensor F. Two tasks are completed here: generating the desired hairstyle shape via the Shape Module, and adjusting the F tensor for inpainting after the shape change.

In the Blending module, hair color is transferred from one image to another. This is achieved by editing the S space of the source image with the trained encoder, while also taking additional embeddings from the source images into account.

Although the image after blending could be considered final, a new Post-Processing module is required. This step aims to restore any details lost from the original image, preserving facial identity and improving the realism of the result.
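The four modules can be read as a simple pipeline in which each stage consumes the outputs of the previous one. The sketch below only illustrates that data flow. The function names, dictionary keys, and string placeholders are hypothetical and do not correspond to the HairFastGAN code base.

```python
from typing import Callable, Dict, List

State = Dict[str, str]

# Every stage below is a hypothetical placeholder that only records what the
# corresponding module is described as producing; no real model is involved.
def embed(state: State) -> State:
    return {**state, "latents": "W+ and FS codes", "masks": "face segmentation"}

def align(state: State) -> State:
    return {**state, "F": f"F aligned to shape of {state['shape']}"}

def blend(state: State) -> State:
    return {**state, "S": f"S recolored from {state['color']}"}

def post_process(state: State) -> State:
    return {**state, "result": f"{state['face']} with restored details"}

PIPELINE: List[Callable[[State], State]] = [embed, align, blend, post_process]

def run_pipeline(face: str, shape: str, color: str) -> State:
    """Pass the three inputs through the four stages in order."""
    state: State = {"face": face, "shape": shape, "color": color}
    for stage in PIPELINE:
        state = stage(state)
    return state

out = run_pipeline("face.png", "shape.png", "color.png")
for key in ("latents", "F", "S", "result"):
    print(f"{key}: {out[key]}")
```

Keeping the stages as separate functions mirrors the description above: each module can be swapped or inspected on its own.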

Embedding

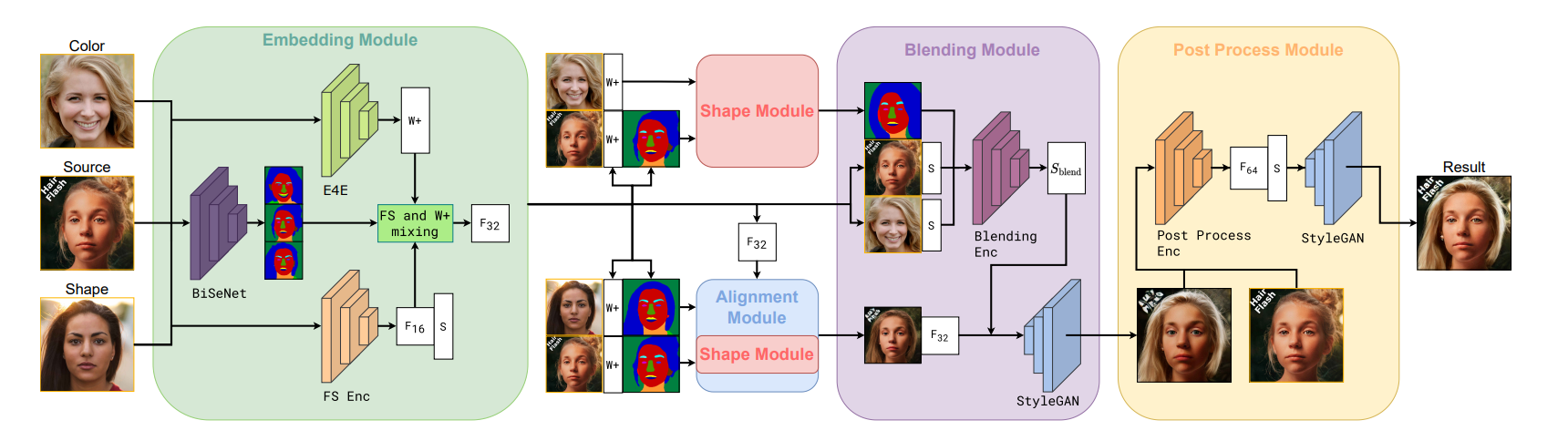

To start changing a hairstyle, the images are first converted into StyleGAN space. Methods like Barbershop and StyleYourHair do this by reconstructing each image in FS space through an optimization process. In this research, a pre-trained FS encoder is used instead, which quickly produces the FS representations of the images. It is one of the best available encoders in terms of reconstruction quality.

But here's the issue: FS space isn't easy to edit. Changing hair color with the FS encoder in a Barbershop-style setup doesn't work very well. So another encoder, E4E, is used as well. It is simple and reconstructs images less faithfully, but it is well suited to editing. The F tensors (which hold the information about the hair) from both encoders are then mixed to solve this problem.
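One way to picture the mixing step is as a mask-weighted blend of two feature maps. The sketch below assumes, for illustration only, that the E4E features are used where the hair mask is active and the FS encoder features everywhere else. The function name, the single-channel 2x2 maps, and the blending rule are assumptions, not the authors' implementation.

```python
from typing import List

Grid = List[List[float]]

def mix_feature_maps(f_fs: Grid, f_e4e: Grid, hair_mask: Grid) -> Grid:
    """Blend two single-channel feature maps with a soft hair mask in [0, 1].

    Assumed rule (illustrative): mixed = mask * f_e4e + (1 - mask) * f_fs.
    """
    return [
        [m * e + (1.0 - m) * f for f, e, m in zip(row_fs, row_e4e, row_mask)]
        for row_fs, row_e4e, row_mask in zip(f_fs, f_e4e, hair_mask)
    ]

# Toy values: FS features are all 1.0, E4E features are all 5.0.
f_fs = [[1.0, 1.0], [1.0, 1.0]]
f_e4e = [[5.0, 5.0], [5.0, 5.0]]
hair_mask = [[1.0, 0.0], [0.5, 0.0]]

f_mix = mix_feature_maps(f_fs, f_e4e, hair_mask)
print(f_mix)  # [[5.0, 1.0], [3.0, 1.0]]
```

With a soft mask, pixels on the hair boundary receive a weighted average of both encoders' features instead of a hard switch.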

Alignment

In this step, the hairstyle shape is transferred, so that the hair in one picture looks like the hair in another. To do this, a mask outlining the desired hair is created, and the hair in the first picture is then adjusted to match that mask.

CtrlHair already offers a way to do this. It uses a Shape Encoder to understand the shapes of hair and faces in pictures, and a Shape Adaptor to adjust the hair in one picture to match the shape of another. This method usually works well, but it has some issues.

One big problem is that the Shape Adaptor is trained on hair and faces in similar poses. If the poses in the two pictures differ a lot, the transferred hair can end up distorted. The CtrlHair team tried to fix this by adjusting the mask afterwards, but that is not the most efficient solution.

To tackle this issue, an additional component called the Rotate Encoder was developed. It is trained to adjust the shape image to match the pose of the source image, mainly by modifying the representation of the image before it is segmented. Fine details are not needed to create the mask, so a simplified representation is used here. The encoder is trained to handle complex poses without distorting the hair, and if the poses already match, it leaves the hairstyle unchanged.
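To make the idea of a target mask concrete, the following sketch composes one from a source segmentation and the hair mask of an already pose-aligned shape image. It is a deliberately simplified stand-in for the Shape Encoder and Shape Adaptor described above. The label values, the function name, and the rule for marking pixels that need inpainting are all assumptions.

```python
# Hypothetical label values for a tiny segmentation map.
BACKGROUND, FACE, HAIR, INPAINT = 0, 1, 2, 3

def compose_target_mask(source_seg, shape_hair):
    """Combine the source segmentation with the new hair region.

    - pixels covered by the new hairstyle become HAIR
    - pixels where the source had hair but the new style has none are
      marked INPAINT, since their content has to be filled in
    - all other pixels keep the source label
    """
    target = []
    for src_row, hair_row in zip(source_seg, shape_hair):
        row = []
        for label, new_hair in zip(src_row, hair_row):
            if new_hair:
                row.append(HAIR)
            elif label == HAIR:
                row.append(INPAINT)
            else:
                row.append(label)
        target.append(row)
    return target

source_seg = [
    [HAIR, HAIR, BACKGROUND],
    [HAIR, FACE, BACKGROUND],
    [BACKGROUND, FACE, BACKGROUND],
]
shape_hair = [
    [1, 1, 1],
    [0, 0, 1],
    [0, 0, 0],
]

target = compose_target_mask(source_seg, shape_hair)
for row in target:
    print(row)
```

The INPAINT label corresponds to the regions mentioned earlier where the F tensor has to be adjusted after the shape change.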

Blending

In the next step, the main focus is on changing the hair color to the desired shade. Barbershop's earlier method was too rigid: it tried to find a balance between the source and desired color vectors. This often resulted in incomplete edits and introduced unwanted artifacts because of the optimization techniques involved.

To improve this, an encoder with an architecture similar to HairCLIP is added, which predicts how the hair's style vector changes given two input vectors. This method uses special modulation layers that are more stable and well suited to changing styles.

Additionally, the model is fed CLIP embeddings of both the source image (including hair) and the hair-only part of the color image. This extra information helps preserve details that might get lost during the embedding process and, according to the authors' experiments, significantly improves the final result.

Experimental Results

The experiments revealed that while the CtrlHair method scored best on the FID metric, it did not perform as well visually as other state-of-the-art approaches. This discrepancy stems from its post-processing technique, which blends the original image with the final result using Poisson blending. While this approach is favored by the FID metric, it often produces noticeable blending artifacts. The HairFast method, on the other hand, had a better blending step but struggled in cases with significant changes in facial hues. This made it hard to use Poisson blending effectively, because it tends to emphasize differences in shade, leading to lower scores on quality metrics.
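FID compares the statistics of deep features extracted from real and generated images, not individual pixels, which may be part of why a method can score well while still showing visible seams. As a simplified illustration, the sketch below computes the Fréchet distance between two one-dimensional Gaussians fitted to sample lists. The real metric uses mean vectors and covariance matrices of Inception features; the function name and sample values here are made up.

```python
from statistics import fmean, pstdev

def frechet_distance_1d(real, generated):
    """Squared Frechet distance between 1-D Gaussians fitted to two samples.

    In one dimension the general expression reduces to
    (mu_r - mu_g)^2 + (sigma_r - sigma_g)^2.
    """
    mu_r, mu_g = fmean(real), fmean(generated)
    sd_r, sd_g = pstdev(real), pstdev(generated)
    return (mu_r - mu_g) ** 2 + (sd_r - sd_g) ** 2

real = [0.0, 2.0, 4.0]
shifted = [1.0, 3.0, 5.0]   # same spread, mean moved by 1
wider = [-2.0, 2.0, 6.0]    # same mean, twice the spread

same = frechet_distance_1d(real, real)
shifted_distance = frechet_distance_1d(real, shifted)
wider_distance = frechet_distance_1d(real, wider)
print(same, shifted_distance, wider_distance)
```

Because only the mean and spread enter the formula, two samples with matching statistics get a distance near zero regardless of how individual values are arranged.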

A novel post-processing module has been developed in this research to address this. It is designed to handle more complex tasks, such as rebuilding the original face and background, repairing the hair after blending, and filling in any missing parts. The module produces a highly detailed image, with four times more detail than what was used before. Unlike tools that focus on editing images, this one prioritizes making the image look as good as possible without needing extra edits.

Demo


To run this demo, we will first open the notebook HairFastGAN.ipynb. This notebook has all the code we need to try out and experiment with the model. Before running the demo, however, we need to clone the repo and install the necessary libraries.

- Clone the repo and install Ninja

!wget https://github.com/ninja-build/ninja/releases/download/v1.8.2/ninja-linux.zip
!sudo unzip ninja-linux.zip -d /usr/local/bin/
!sudo update-alternatives --install /usr/bin/ninja ninja /usr/local/bin/ninja 1 --force

## clone repo
!git clone https://github.com/AIRI-Institute/HairFastGAN
%cd HairFastGAN

- Install the necessary packages and the pre-trained models

from concurrent.futures import ProcessPoolExecutor

def install_packages():
    !pip install pillow==10.0.0 face_alignment dill==0.2.7.1 addict fpie git+https://github.com/openai/CLIP.git -q

def download_models():
    !git clone https://huggingface.co/AIRI-Institute/HairFastGAN
    !cd HairFastGAN && git lfs pull && cd ..
    !mv HairFastGAN/pretrained_models pretrained_models
    !mv HairFastGAN/input input
    !rm -rf HairFastGAN

# Run the package installation and the model download in parallel.
with ProcessPoolExecutor() as executor:
    executor.submit(install_packages)
    executor.submit(download_models)

- Next, we will set up an argument parser, create an instance of the HairFast class, and perform the hair swapping operation using the default configuration or parameters.

import argparse
from pathlib import Path
from hair_swap import HairFast, get_parser

# Build the model with the default arguments (an empty argument list).
model_args = get_parser()
hair_fast = HairFast(model_args.parse_args([]))

- Use the script below, which contains functions for downloading, converting, and displaying images, with support for caching and various input formats.

import requests
from io import BytesIO
from PIL import Image
from functools import cache

import matplotlib.pyplot as plt
import matplotlib.gridspec as gridspec
import torchvision.transforms as T
import torch
%matplotlib inline

def to_tuple(func):
    # Convert list arguments to tuples so they are hashable and can be cached.
    def wrapper(arg):
        if isinstance(arg, list):
            arg = tuple(arg)
        return func(arg)
    return wrapper

@to_tuple
@cache
def download_and_convert_to_pil(urls):
    pil_images = []
    for url in urls:
        response = requests.get(url, allow_redirects=True, headers={"User-Agent": "Mozilla/5.0"})
        img = Image.open(BytesIO(response.content))
        pil_images.append(img)
        print(f"Downloaded an image of size {img.size}")
    return pil_images

def display_images(images=None, **kwargs):
    # Images passed as keyword arguments are shown with the keyword as the title.
    is_titles = images is None
    images = images or kwargs

    grid = gridspec.GridSpec(1, len(images))
    fig = plt.figure(figsize=(20, 10))

    for i, item in enumerate(images.items() if is_titles else images):
        title, img = item if is_titles else (None, item)

        # Accept tensors, file paths, or PIL images.
        img = T.functional.to_pil_image(img) if isinstance(img, torch.Tensor) else img
        img = Image.open(img) if isinstance(img, str | Path) else img

        ax = fig.add_subplot(1, len(images), i+1)
        ax.imshow(img)
        if title:
            ax.set_title(title, fontsize=20)
        ax.axis('off')

    plt.show()

- Try the hair swap with the downloaded image

# Adjust this path if the repo was cloned to a different location.
input_dir = Path('/HairFastGAN/input')
face_path = input_dir / '6.png'
shape_path = input_dir / '7.png'
color_path = input_dir / '8.png'

final_image = hair_fast.swap(face_path, shape_path, color_path)
T.functional.to_pil_image(final_image).resize((512, 512))  # 1024 -> 512
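The download helper in the script above stacks a tuple-converting decorator on top of `functools.cache`. The standalone sketch below shows why that combination is useful: `cache` needs hashable arguments, and a list of URLs is not hashable. The `fetch_all` function and its fake return values are hypothetical stand-ins for the real download.

```python
from functools import cache

def to_tuple(func):
    """Convert a list argument to a tuple so the cached function can hash it."""
    def wrapper(arg):
        if isinstance(arg, list):
            arg = tuple(arg)
        return func(arg)
    return wrapper

calls = []  # records every time the function body actually runs

@to_tuple
@cache
def fetch_all(urls):
    # Hypothetical stand-in for a network download.
    calls.append(urls)
    return [f"image from {u}" for u in urls]

first = fetch_all(["a.png", "b.png"])
second = fetch_all(["a.png", "b.png"])   # served from the cache
print(len(calls), first is second)

# Without the conversion, cache cannot hash the list argument.
@cache
def count_urls(urls):
    return len(urls)

try:
    count_urls(["a.png"])
    raised = False
except TypeError:
    raised = True
print(raised)
```

One consequence of this pattern is that the cached call returns the same list object each time, so callers should avoid mutating the result in place.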

Ending Thoughts

In this article, we introduced the HairFast method for hairstyle transfer, which stands out for its ability to deliver high-quality, high-resolution results comparable to optimization-based methods while operating at nearly real-time speeds.

However, like many other methods, it is constrained by the limited ways in which hairstyles can be transferred. Still, the architecture lays the groundwork for addressing this limitation in future work.

The future of virtual hair styling with AI holds great promise for changing the way we interact with and explore hairstyles. As AI technologies advance, even more realistic and customizable virtual hair makeover tools are expected, leading to highly personalized virtual styling experiences.

Moreover, as research in this field continues to improve, we can expect to see greater integration of virtual hair styling tools across various platforms, from mobile apps to virtual reality environments. This widespread accessibility will let users experiment with different looks and trends from the comfort of their own devices.

Overall, virtual hair styling with AI has the potential to redefine beauty standards, empower individuals to express themselves creatively, and transform the way we perceive and engage with hairstyling.

We enjoyed experimenting with HairFastGAN's novel approach, and we really hope you enjoyed reading the article and trying it with Paperspace.

Thank You!