In many Artificial Intelligence (AI) applications, such as Natural Language Processing (NLP) and Computer Vision (CV), there is a need for a unified pre-training framework (e.g. Florence-2) that can operate autonomously. Current datasets for specialized applications still require human labeling, which limits the development of foundation models for complex CV tasks.

Microsoft researchers created the Florence-2 model (2023), which can handle many computer vision tasks. It addresses two obstacles: the lack of a unified model architecture and the scarcity of comprehensive training data.

About us: Viso.ai provides the end-to-end Computer Vision Infrastructure, Viso Suite. It is a powerful all-in-one solution for AI vision. Companies worldwide use it to develop and deliver real-world applications dramatically faster. Get a demo for your company.

History of the Florence-2 model

In a nutshell, foundation models are models pre-trained on broad, general tasks, often in a self-supervised mode, because it is impractical to find enough labeled data for fully supervised learning at that scale. They can then be adapted to many new tasks, with or without fine-tuning or additional training, for example through in-context learning.

Researchers introduced the term ‘foundation’ because these models serve as the base for many other problems and applications. This has advantages (it is easy to build something new) and drawbacks (everything built on a bad foundation inherits its flaws).

Despite the name, these models are not fundamental to AI: they are not a basis for understanding or building intelligence or consciousness. To apply foundation models to CV tasks, Microsoft researchers organized the range of tasks along three dimensions:

- Space (scene classification, object detection)

- Time (static, dynamic)

- Modality (RGB, depth).

They then defined a foundation model for CV as a pre-trained model plus adapters that can solve all problems in this Space-Time-Modality space, with the ability to transfer zero-shot.
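Zero-shot transfer in contrastive image-text models usually works by comparing an image embedding with the embeddings of text prompts for each candidate label, with no task-specific training. The sketch below illustrates that general idea under stated assumptions: the three-dimensional vectors are made-up toy embeddings standing in for encoder outputs, and `zero_shot_classify` is a hypothetical helper, not part of any Florence release.

```python
import math

def normalize(v):
    """Scale a vector to unit L2 norm."""
    norm = math.sqrt(sum(x * x for x in v))
    return [x / norm for x in v]

def cosine(a, b):
    """Cosine similarity between two vectors."""
    a, b = normalize(a), normalize(b)
    return sum(x * y for x, y in zip(a, b))

def zero_shot_classify(image_emb, label_embs):
    """Pick the label whose text embedding is most similar to the image embedding.

    label_embs maps each label to the embedding of a prompt such as
    "a photo of a {label}" (hypothetical; any text encoder could produce it).
    """
    scores = {label: cosine(image_emb, emb) for label, emb in label_embs.items()}
    return max(scores, key=scores.get), scores

# Toy embeddings (made up for illustration, not real encoder outputs).
label_embs = {
    "cat": [0.9, 0.1, 0.0],
    "dog": [0.1, 0.9, 0.1],
    "car": [0.0, 0.1, 0.9],
}
image_emb = [0.8, 0.3, 0.1]
best, scores = zero_shot_classify(image_emb, label_embs)
print(best)  # prints: cat
```

New classes can be added by embedding a new prompt, which is what makes the transfer "zero-shot": no weights are updated.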

They presented their work as a new paradigm for building a vision foundation model and named it Florence, after the birthplace of the Renaissance; Florence-2 is the follow-up to this work. They view it as an ecosystem of four large areas:

- Data collection

- Model pre-training

- Task adaptation

- Training infrastructure

What is the Florence-2 model?

Xiao et al. (Microsoft, 2023) developed Florence-2 in line with the NLP goal of flexible model development on a common base. Florence-2 combines a sequence-to-sequence learning paradigm with standard vision-language modeling to handle a variety of CV tasks.

Florence-2 sets new performance standards with strong zero-shot and fine-tuning capabilities. It performs tasks such as captioning, referring expression comprehension, visual grounding, and object detection. Moreover, when fine-tuned on publicly available human-annotated data, Florence-2 surpasses existing specialist models on several benchmarks and sets new state-of-the-art results.

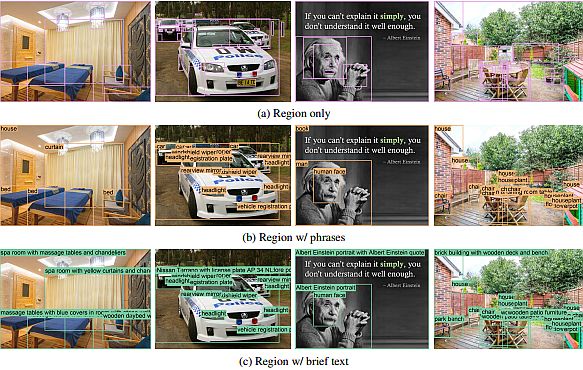

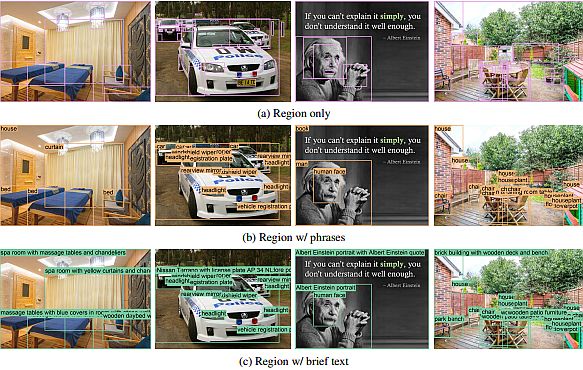

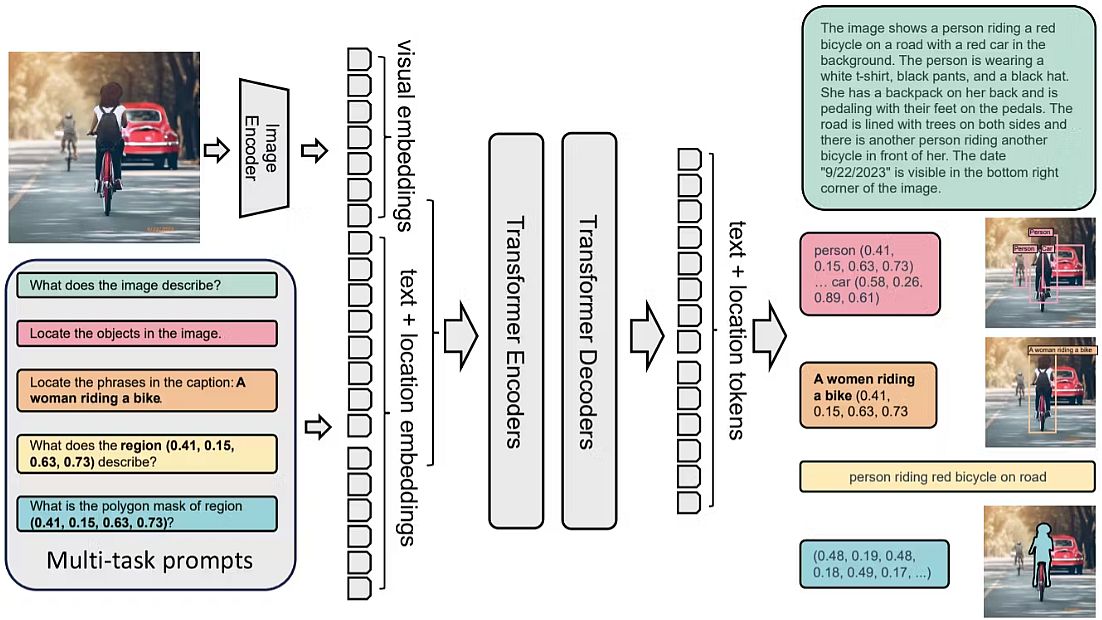

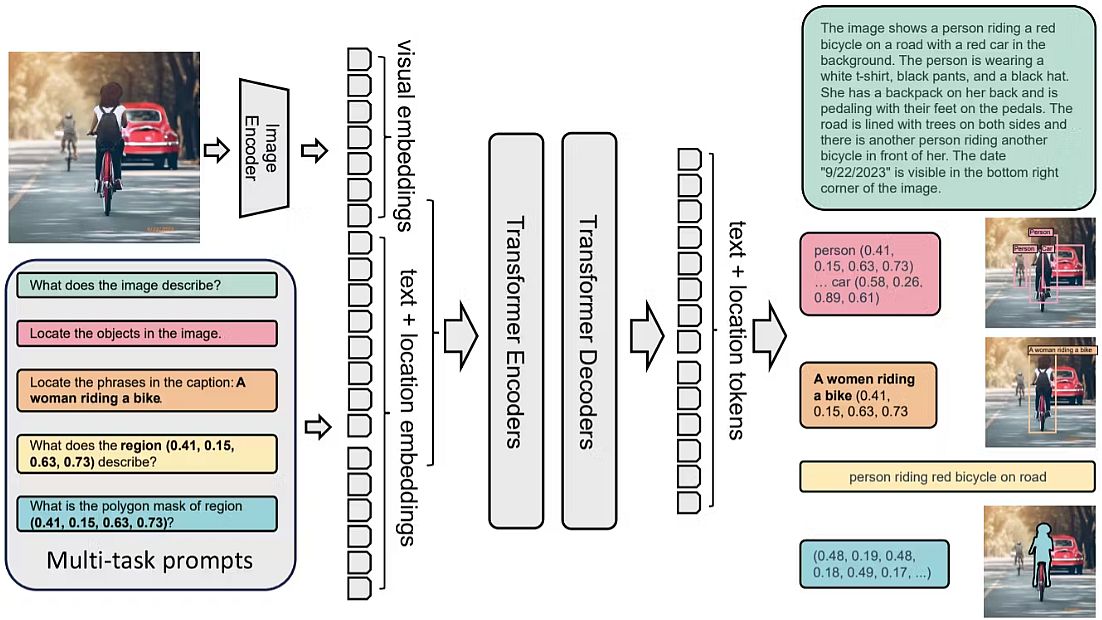

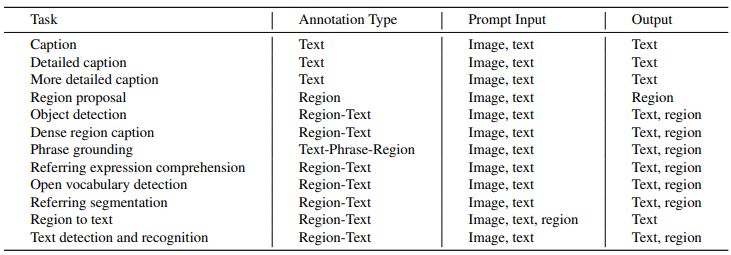

Florence-2 uses a sequence-to-sequence architecture to solve various computer vision tasks. Every task is handled as a translation problem, in which the model generates the appropriate output given an input image and a task-specific prompt.

Tasks can involve region (spatial) data or text data, and the model adjusts its processing to the task's requirements. For region-specific tasks, the researchers added location tokens to the tokenizer's vocabulary. These tokens support several formats, including box, quad, and polygon representations.

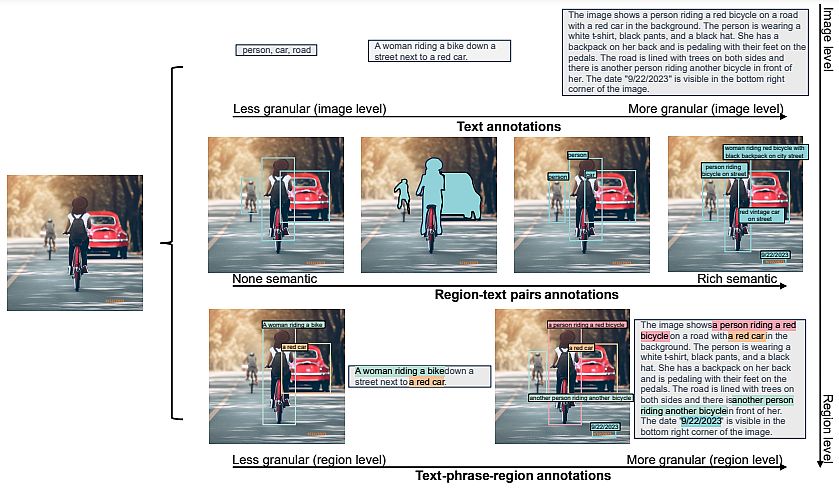

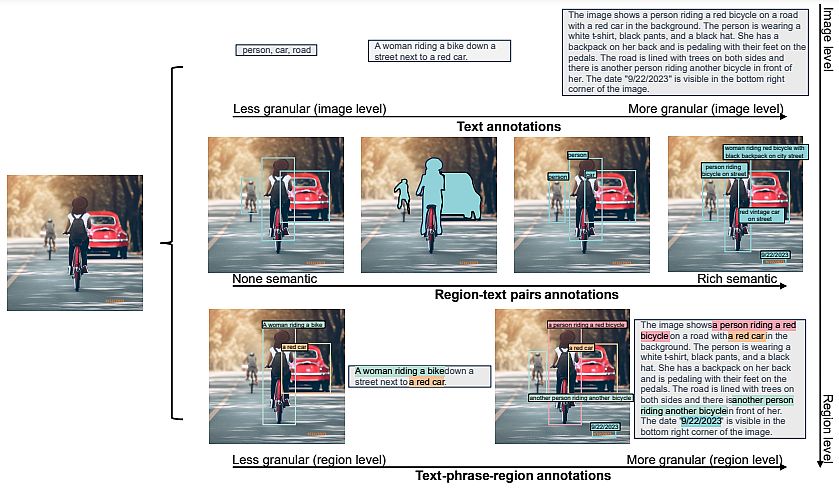

The tasks span three levels of spatial and semantic granularity:

- Image-level understanding: language descriptions that capture high-level semantics and support a thorough comprehension of the visual content. Example tasks include image classification, captioning, and visual question answering.

- Region recognition: object recognition and entity localization within images, capturing relationships between objects and their spatial context. Object detection, instance segmentation, and referring expression comprehension are examples.

- Fine-grained visual-semantic alignment: tasks that require a detailed understanding of both text and image. They involve locating the image regions that correspond to text phrases, such as objects, attributes, or relations.

Florence-2 Architecture and Data Engine

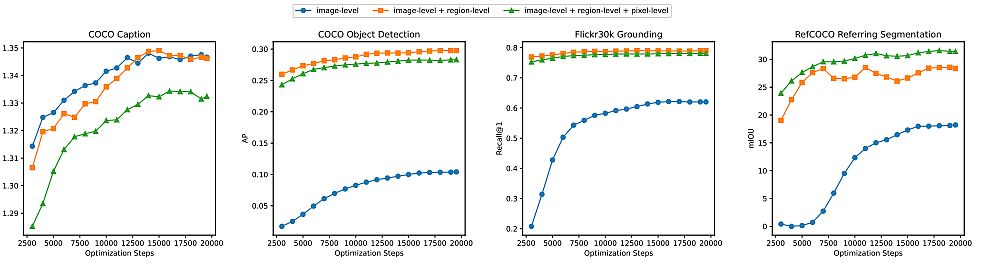

As a universal representation model, Florence-2 can solve different CV tasks with a single set of weights and a unified representation architecture. As the architecture figure shows, Florence-2 applies a sequence-to-sequence learning paradigm, unifying all tasks under a common CV modeling goal.

The single model takes images coupled with task prompts as instructions and generates the desired labels in text form. It uses a vision encoder to convert images into visual token embeddings. To generate the response, these visual tokens are combined with the prompt's text embeddings and processed by a transformer-based encoder-decoder.
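A minimal sketch of how the encoder input could be assembled, assuming toy stand-ins: `toy_vision_encoder` emits one random vector per 4x4 image patch and `toy_text_embedder` assigns a random vector to each whitespace-separated token. Both are hypothetical placeholders for the learned vision encoder and tokenizer; only the concatenation of visual and prompt tokens reflects the description above. In a real model the visual tokens would typically be projected to the text embedding width first; here both already share `D_MODEL`.

```python
import random

D_MODEL = 8  # hypothetical embedding width shared by image and text tokens

def toy_vision_encoder(height, width, patch=4, seed=0):
    """Stand-in for a learned vision encoder: one D_MODEL-dim vector per patch."""
    rng = random.Random(seed)
    n_patches = (height // patch) * (width // patch)
    return [[rng.uniform(-1, 1) for _ in range(D_MODEL)] for _ in range(n_patches)]

def toy_text_embedder(prompt, table, seed=1):
    """Stand-in for a tokenizer plus embedding table: one vector per token."""
    rng = random.Random(seed)
    vectors = []
    for token in prompt.split():
        if token not in table:
            table[token] = [rng.uniform(-1, 1) for _ in range(D_MODEL)]
        vectors.append(table[token])
    return vectors

def build_encoder_input(height, width, prompt, table):
    """Concatenate visual tokens with prompt tokens into one input sequence.

    A transformer encoder would attend over this sequence; a decoder would
    then generate the answer tokens one at a time.
    """
    visual = toy_vision_encoder(height, width)
    text = toy_text_embedder(prompt, table)
    return visual + text

table = {}
seq = build_encoder_input(32, 32, "What does the image describe?", table)
print(len(seq), len(seq[0]))  # prints: 69 8  (64 patch tokens + 5 prompt tokens)
```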

Microsoft researchers formulated each task as a translation problem: given an input image and a task-specific prompt, the model generates the correct output response. Depending on the task, the prompt and response can be either text or region.

- Text: When the prompt or answer is plain text without special formatting, they kept it as-is in the final sequence-to-sequence format.

- Region: For region-specific tasks, they added location tokens to the tokenizer's vocabulary, representing quantized coordinates. They quantized coordinates into 1,000 bins and represented regions using formats suited to the task, such as boxes, quads, and polygons.
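The 1,000-bin scheme can be sketched as follows. The floor-then-clamp rounding, bin-center dequantization, and `<loc_N>` token spelling are assumptions made for illustration; the text only states that coordinates are quantized into 1,000 bins and that boxes, quads, and polygons are supported. Because all three formats are flat lists of alternating x and y values, one routine serializes them all.

```python
import re

NUM_BINS = 1000  # number of quantization bins per axis

def quantize(value, size, num_bins=NUM_BINS):
    """Map a pixel coordinate in [0, size] to a bin index in [0, num_bins - 1]."""
    idx = int(value * num_bins / size)
    return min(max(idx, 0), num_bins - 1)

def dequantize(idx, size, num_bins=NUM_BINS):
    """Map a bin index back to the pixel coordinate at the center of that bin."""
    return (idx + 0.5) * size / num_bins

def region_to_tokens(coords, width, height):
    """Serialize flat (x, y, x, y, ...) coordinates as location tokens.

    Covers a box (x0, y0, x1, y1), a quad (4 corners, 8 values) and a
    polygon (any number of vertices). The '<loc_N>' spelling is an assumption.
    """
    return "".join(
        f"<loc_{quantize(v, width if i % 2 == 0 else height)}>"
        for i, v in enumerate(coords)
    )

def tokens_to_region(text, width, height):
    """Parse location tokens back into approximate pixel coordinates."""
    idxs = [int(n) for n in re.findall(r"<loc_(\d+)>", text)]
    return [dequantize(n, width if i % 2 == 0 else height) for i, n in enumerate(idxs)]

box = (48.0, 120.0, 300.5, 410.0)
tokens = region_to_tokens(box, width=640, height=480)
print(tokens)  # prints: <loc_75><loc_250><loc_469><loc_854>
print(tokens_to_region(tokens, 640, 480))
```

Each bin spans `size / 1000` pixels, so the round trip is accurate to within half a bin, about 0.3 px for a 640-pixel-wide image.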

Data Engine in Florence-2

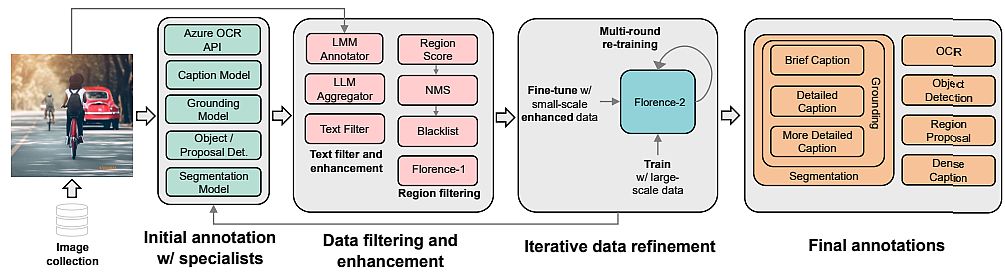

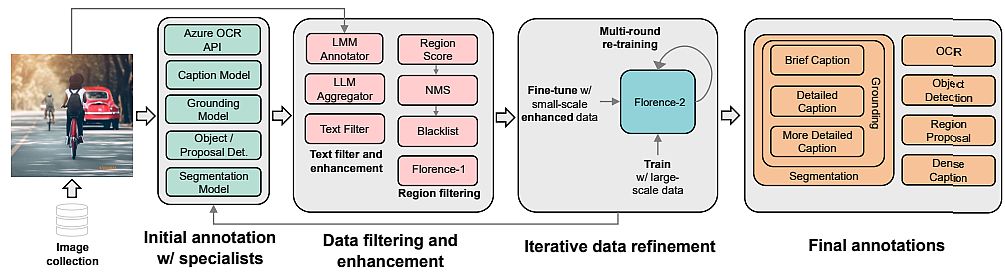

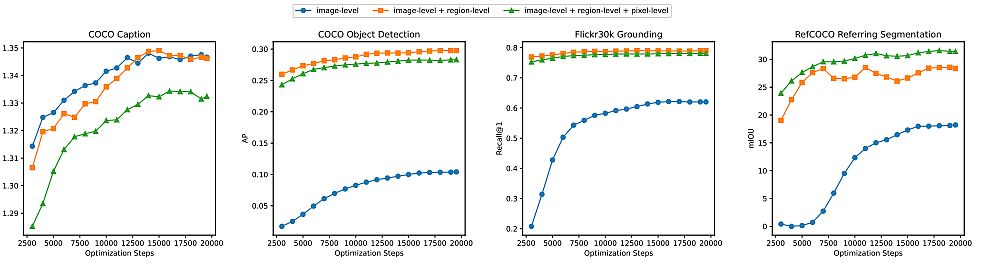

To train the Florence-2 architecture, the researchers needed a unified, large-scale, multitask dataset covering different kinds of image annotations. Because no such dataset existed, they built a new one, FLD-5B.
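The article does not describe the record format of the new dataset. As a hypothetical sketch, one image's annotations at the three granularity levels described earlier (image-level captions, labeled regions, and phrase-to-region groundings) could be stored together and flattened into (prompt, target) pairs for multitask training. The class names and prompt strings such as `"caption:"` are invented for illustration.

```python
from dataclasses import dataclass, field

Box = tuple  # (x0, y0, x1, y1) in pixels

@dataclass
class RegionAnnotation:
    """Region level: one labeled box."""
    label: str
    box: Box

@dataclass
class PhraseGrounding:
    """Fine-grained level: a caption phrase and the boxes it refers to."""
    phrase: str
    boxes: list

@dataclass
class ImageRecord:
    """All annotation types for one image, kept together in a single record."""
    image_id: str
    captions: list = field(default_factory=list)    # image level
    regions: list = field(default_factory=list)     # region level
    groundings: list = field(default_factory=list)  # text-phrase-region level

    def num_annotations(self):
        return len(self.captions) + len(self.regions) + len(self.groundings)

    def to_training_pairs(self):
        """Flatten into (task prompt, target text) pairs; prompts are hypothetical."""
        pairs = [("caption:", c) for c in self.captions]
        pairs += [("detect:", f"{r.label} {r.box}") for r in self.regions]
        pairs += [(f"ground: {g.phrase}", " ".join(map(str, g.boxes))) for g in self.groundings]
        return pairs

record = ImageRecord(
    image_id="img_0001",
    captions=["A dog chases a red ball on the grass."],
    regions=[RegionAnnotation("dog", (40, 60, 210, 300)),
             RegionAnnotation("ball", (250, 220, 300, 270))],
    groundings=[PhraseGrounding("a red ball", [(250, 220, 300, 270)])],
)
for prompt, target in record.to_training_pairs():
    print(prompt, "->", target)
```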

Technical Challenges in Model Development

Image descriptions are difficult to use directly because different images can share a single description: in FLD-900M, about 350M descriptions are each paired with more than one image.

This complicates training. Standard contrastive learning assumes that every image-text pair has a unique description and treats all other descriptions as negative examples, so images that share a description would wrongly be pushed apart.

To handle this, the researchers used unified image-text contrastive learning (UniCL, 2022). UniCL is unified in the sense that it combines two learning paradigms in a common image-text-label space:

- Discriminative learning (mapping an image to a label, as in supervised learning), and

- Image-text pre-training (mapping a description to a unique label, as in contrastive learning).

The architecture has an image encoder and a text encoder. The feature vectors output by the encoders are normalized and fed into a bidirectional objective function: one component is the supervised image-to-text contrastive loss, and the other is the supervised text-to-image contrastive loss in the opposite direction.
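A minimal, pure-Python sketch of a UniCL-style bidirectional loss, assuming L2-normalized features, a fixed temperature of 0.07 (a common choice, not a value from the source), and averaging over the batch. Pairs whose `labels` match are treated as positives for each other, which is how a description shared by several images stops producing false negatives. Here the temperature divides the similarities; formulations that multiply by a scale factor are equivalent.

```python
import math

def dot(a, b):
    return sum(x * y for x, y in zip(a, b))

def l2_normalize(v):
    norm = math.sqrt(dot(v, v))
    return [x / norm for x in v]

def log_softmax_at(row, j):
    """log(exp(row[j]) / sum_k exp(row[k])), computed stably."""
    m = max(row)
    return row[j] - (m + math.log(sum(math.exp(x - m) for x in row)))

def directional_loss(logits, labels):
    """Mean over anchors i of the average -log p over all targets sharing label i."""
    n = len(labels)
    total = 0.0
    for i, row in enumerate(logits):
        positives = [j for j in range(n) if labels[j] == labels[i]]
        total += -sum(log_softmax_at(row, j) for j in positives) / len(positives)
    return total / n

def unicl_loss(image_feats, text_feats, labels, temperature=0.07):
    """Bidirectional loss: image-to-text plus text-to-image.

    labels[i] identifies the class (or unique description) of pair i.
    """
    u = [l2_normalize(v) for v in image_feats]
    w = [l2_normalize(v) for v in text_feats]
    logits = [[dot(ui, wj) / temperature for wj in w] for ui in u]
    transposed = [list(col) for col in zip(*logits)]  # rows = texts, columns = images
    return directional_loss(logits, labels) + directional_loss(transposed, labels)

images = [[1.0, 0.0], [0.9, 0.1], [0.0, 1.0]]
texts = [[1.0, 0.0], [1.0, 0.0], [0.0, 1.0]]  # pairs 0 and 1 share one description
print(unicl_loss(images, texts, labels=[0, 0, 1]))
```

With every label distinct, this reduces to the standard bidirectional contrastive (InfoNCE-style) loss.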

The encoders themselves are a standard 12-layer transformer for text (256M parameters) and a hierarchical Vision Transformer for images, CoSwin: a modification of the Swin Transformer with convolutional embeddings, as in CvT (637M parameters).

In total, the model has 893M parameters. It was trained for 10 days on 512 A100-40GB GPUs. After pre-training, the researchers extended Florence-2 with several types of adapters.

Experiments and Results

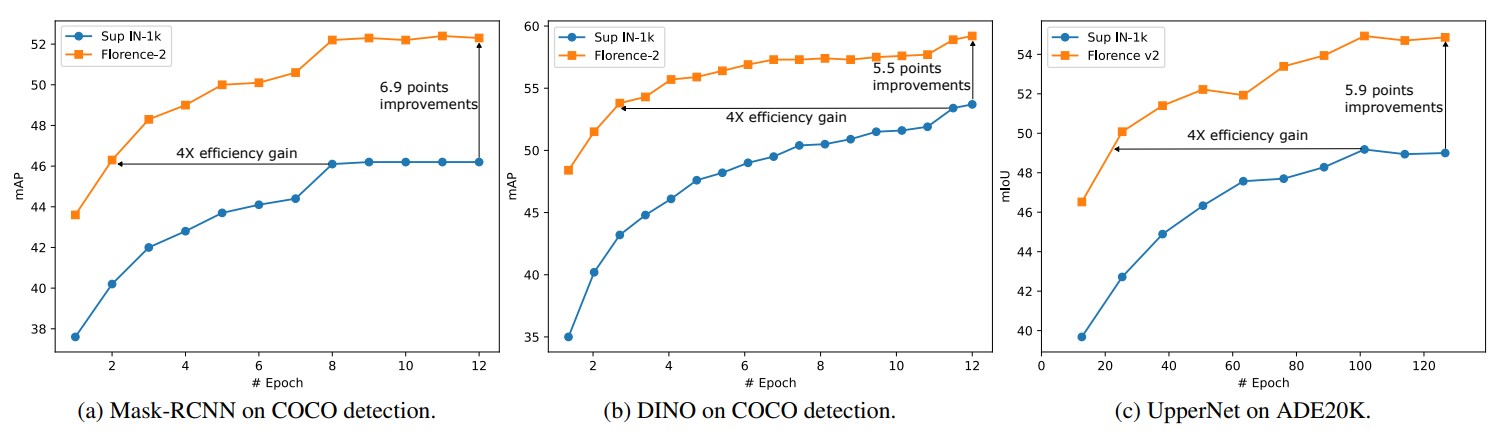

The researchers trained Florence-2 to learn finer-grained representations through object detection. To do this, they added the Dynamic Head adapter, a specialized attention mechanism for the detection head. It applies attention to the feature tensor along three dimensions: level (scale), spatial position, and channel.
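The source only names the three attention axes. As a heavily simplified, parameter-free caricature (not the actual Dynamic Head, which uses learned layers such as deformable convolutions), the sketch below gates a feature tensor stored as nested lists `feats[level][position][channel]` first along the level axis, then the spatial axis, then the channel axis. The gating functions are assumptions chosen only to make each step easy to follow.

```python
import math

def hard_sigmoid(x):
    """Piecewise-linear sigmoid clipped to [0, 1]."""
    return max(0.0, min(1.0, (x + 1.0) / 2.0))

def sigmoid(x):
    return 1.0 / (1.0 + math.exp(-x))

def mean(values):
    values = list(values)
    return sum(values) / len(values)

def dyhead_like_block(feats):
    """Apply level, spatial, and channel gates in sequence (toy, no learned weights)."""
    L, S, C = len(feats), len(feats[0]), len(feats[0][0])
    # 1) Level (scale) gate: one weight per pyramid level from its mean activation.
    level_w = [hard_sigmoid(mean(v for pos in feats[l] for v in pos)) for l in range(L)]
    out = [[[feats[l][s][c] * level_w[l] for c in range(C)] for s in range(S)] for l in range(L)]
    # 2) Spatial gate: one weight per position, pooled over levels and channels.
    spatial_w = [sigmoid(mean(out[l][s][c] for l in range(L) for c in range(C))) for s in range(S)]
    out = [[[out[l][s][c] * spatial_w[s] for c in range(C)] for s in range(S)] for l in range(L)]
    # 3) Channel gate: one weight per channel, pooled over levels and positions.
    channel_w = [sigmoid(mean(out[l][s][c] for l in range(L) for s in range(S))) for c in range(C)]
    return [[[out[l][s][c] * channel_w[c] for c in range(C)] for s in range(S)] for l in range(L)]

# 2 levels x 3 positions x 2 channels (levels assumed already resized to a common size).
feats = [[[0.5, -0.2], [1.0, 0.3], [0.0, 0.4]],
         [[0.2, 0.2], [0.8, -0.5], [0.1, 0.0]]]
print(dyhead_like_block(feats))
```

Because every gate lies in [0, 1], each step can only re-weight features, never amplify them; the real module learns these weights instead.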

They trained on the FLOD-9M dataset (Florence Object Detection Dataset), which merges several existing datasets, including COCO, LVIS, and OpenImages, and adds generated pseudo bounding boxes. In total, it contains 8.9M images, 25,190 object categories, and 33.4M bounding boxes.
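The source does not detail how FLOD-9M reconciles categories across its constituent datasets. One plausible, hypothetical approach is to normalize category names into a single label space and namespace image ids by source dataset, as sketched below; the `synonyms` map and the toy annotations are made up for illustration.

```python
def normalize_name(name):
    """Canonicalize a category name, e.g. 'Traffic_Light' -> 'traffic light'."""
    return " ".join(name.lower().replace("_", " ").split())

def merge_detection_datasets(datasets, synonyms=None):
    """Merge box annotations from several datasets into one label space.

    datasets: {dataset_name: [(image_id, category_name, box), ...]}
    synonyms: optional {normalized_name: canonical_name} map (hypothetical).
    Returns ({category_name: category_id}, merged annotation list).
    """
    synonyms = synonyms or {}
    cat_ids, merged = {}, []
    for ds_name, annotations in datasets.items():
        for image_id, category, box in annotations:
            name = normalize_name(category)
            name = synonyms.get(name, name)
            cid = cat_ids.setdefault(name, len(cat_ids))
            # Prefix ids with the source dataset so ids from different datasets never collide.
            merged.append({"image": f"{ds_name}/{image_id}", "category_id": cid, "box": box})
    return cat_ids, merged

datasets = {
    "coco": [("1", "person", (10, 20, 50, 120)), ("1", "traffic light", (200, 5, 220, 60))],
    "lvis": [("7", "Person", (30, 40, 90, 200)), ("7", "traffic_light", (300, 10, 320, 70))],
    "openimages": [("a9", "Man", (5, 5, 60, 180))],
}
cat_ids, merged = merge_detection_datasets(datasets, synonyms={"man": "person"})
print(cat_ids)               # prints: {'person': 0, 'traffic light': 1}
print(len(merged), "boxes")  # prints: 5 boxes
```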

For vision-language tasks, another adapter was trained with an image-text matching (ITM) loss and the classic RoBERTa masked language modeling (MLM) loss, and then fine-tuned for the VQA task. A further adapter targets video recognition: the researchers took the CoSwin image encoder and replaced its 2D layers (convolutions, patch-merging operators, etc.) with 3D counterparts.
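Masked language modeling hides a fraction of the text tokens and trains the model to predict them. The sketch below follows the BERT-style recipe that RoBERTa also uses: about 15% of positions are selected, and of those 80% become a mask token, 10% a random token, and 10% stay unchanged. `MASK_ID` is a hypothetical token id; the label value -100 matches the default `ignore_index` of PyTorch's `CrossEntropyLoss`, so unselected positions are skipped by the loss. RoBERTa re-samples the mask each time a sequence is seen (dynamic masking), which here corresponds to passing a different `seed` per epoch. In a vision-language adapter the masked tokens would additionally be predicted with access to the image features.

```python
import random

MASK_ID = 4    # hypothetical id of the mask token
IGNORE = -100  # label for positions the MLM loss should skip

def mask_tokens(token_ids, vocab_size, mask_prob=0.15, seed=None):
    """Return (inputs, labels) for one masked-language-modeling example."""
    rng = random.Random(seed)
    inputs = list(token_ids)
    labels = [IGNORE] * len(token_ids)
    for i, tok in enumerate(token_ids):
        if rng.random() < mask_prob:
            labels[i] = tok  # the model must recover the original token here
            r = rng.random()
            if r < 0.8:
                inputs[i] = MASK_ID  # 80%: replace with the mask token
            elif r < 0.9:
                inputs[i] = rng.randrange(vocab_size)  # 10%: random token
            # remaining 10%: keep the original token
    return inputs, labels

tokens = [17, 942, 58, 3301, 76, 12]  # arbitrary token ids
inputs, labels = mask_tokens(tokens, vocab_size=5000, seed=0)
print(inputs)
print(labels)
```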

During initialization, they duplicated the pre-trained 2D weights into the new 3D layers. Additional training then followed in the form of direct fine-tuning on the target task.
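The source says the pre-trained 2D weights were duplicated into the new 3D layers. A common way to do this, borrowed here from I3D-style inflation and assumed rather than confirmed for Florence-2, is to repeat a 2D kernel `t` times along the time axis and divide by `t`, so that a clip of identical frames reproduces the 2D response. The single-channel convolutions below are written out in plain Python to make that property checkable.

```python
def conv2d_valid(img, k):
    """Single-channel 'valid' 2D cross-correlation (what DL frameworks call conv)."""
    kh, kw = len(k), len(k[0])
    H, W = len(img), len(img[0])
    return [[sum(img[y + i][x + j] * k[i][j] for i in range(kh) for j in range(kw))
             for x in range(W - kw + 1)] for y in range(H - kh + 1)]

def conv3d_valid(video, kernel3d):
    """Single-channel 'valid' 3D cross-correlation over (time, height, width)."""
    kt = len(kernel3d)
    out = []
    for t in range(len(video) - kt + 1):
        # A 3D kernel is a stack of 2D slices; sum their responses over time.
        slices = [conv2d_valid(video[t + d], kernel3d[d]) for d in range(kt)]
        h, w = len(slices[0]), len(slices[0][0])
        out.append([[sum(s[y][x] for s in slices) for x in range(w)] for y in range(h)])
    return out

def inflate_kernel(k2d, t):
    """Repeat a 2D kernel t times along time and divide by t."""
    return [[[v / t for v in row] for row in k2d] for _ in range(t)]

frame = [[1.0, 2.0, 3.0], [4.0, 5.0, 6.0], [7.0, 8.0, 9.0]]
k2d = [[1.0, 2.0], [0.0, 1.0]]
static_clip = [frame] * 3              # three identical frames
k3d = inflate_kernel(k2d, 3)           # shape: 3 x 2 x 2
print(conv2d_valid(frame, k2d))        # prints: [[10.0, 14.0], [22.0, 26.0]]
print(conv3d_valid(static_clip, k3d))  # same values up to float rounding, one time step
```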

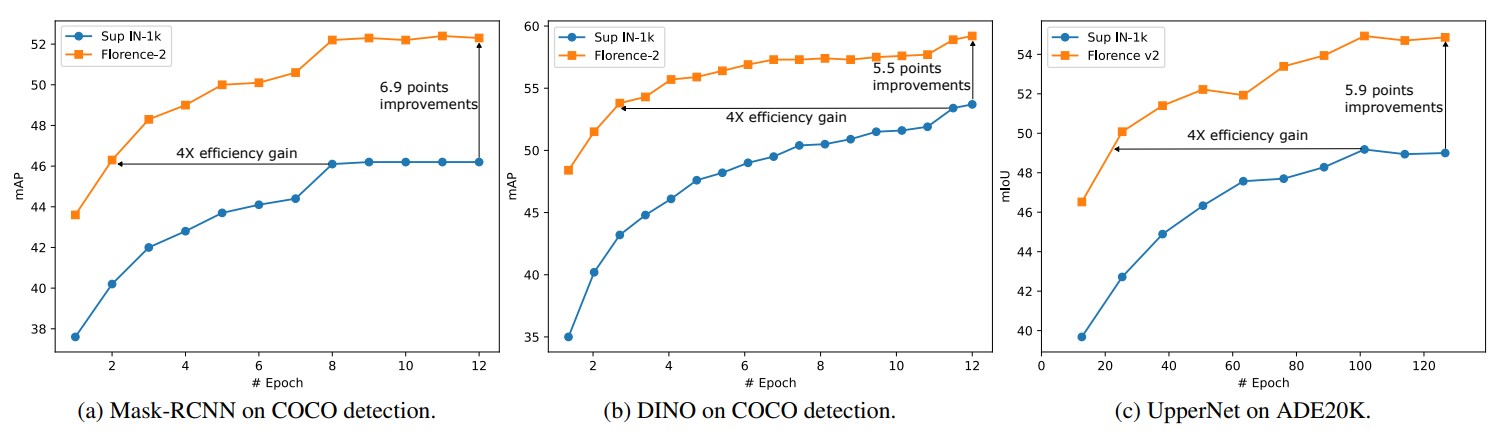

When fine-tuned on ImageNet, Florence-2 is slightly behind the state of the art (SoTA) but about three times smaller. In few-shot cross-domain classification, it beat the benchmark leader, even though the latter used ensembles and other tricks.

In zero-shot image-text retrieval, it matches or surpasses previous results, and with fine-tuning it outperforms them while training for significantly fewer epochs. It also leads in object detection, VQA, and video action recognition.

Applications of Florence-2 in Various Industries

Combined text-region-image annotation can be useful in several industries. Here we list some potential applications:

Medical Imaging

Medical practitioners use MRI, X-ray, and CT imaging to detect anatomical features and anomalies. They then apply text-image annotation to classify and annotate the resulting medical images, which supports more precise and effective diagnosis and treatment.

With its text-image annotation, Florence-2 could help recognize patterns and locate fractures, tumors, abscesses, and a variety of other conditions. Combined annotation has the potential to reduce patient wait times, free up costly scanner slots, and improve diagnostic accuracy.

Transport

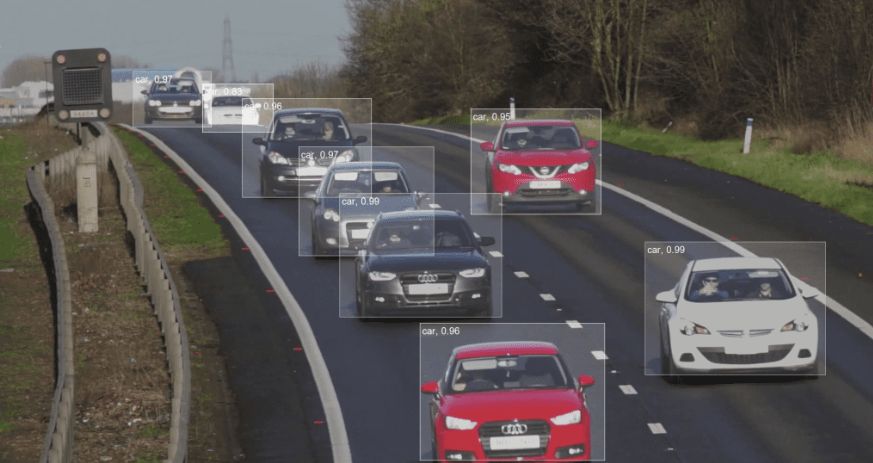

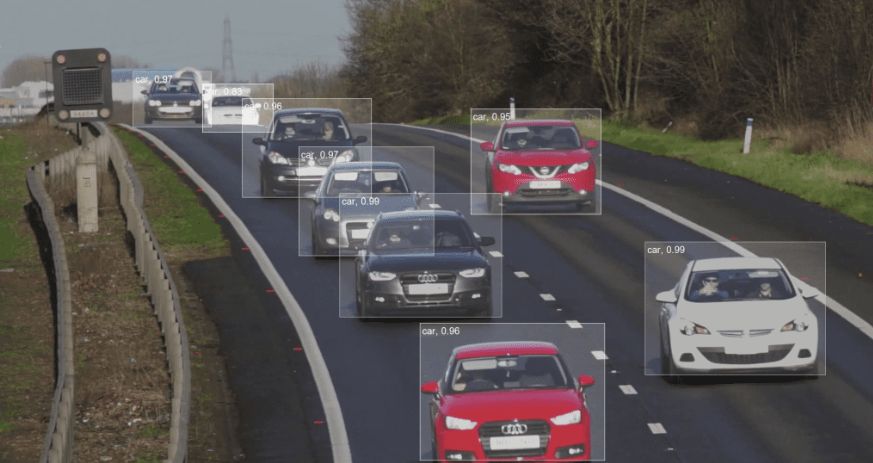

Text-image annotation is essential in the development of traffic and transportation systems. With the help of Florence-2 annotation, autonomous cars can recognize and interpret their surroundings, enabling them to make correct decisions.

Annotation helps distinguish different types of roads, such as city streets and highways, and identify objects such as pedestrians, traffic signs, and other cars. Determining object boundaries, locations, and orientations, and tagging vehicles, people, traffic signs, and road markings, are essential tasks.

Agriculture

Precision agriculture is a relatively new field that combines traditional farming methods with technology to increase production, profitability, and sustainability. It uses robotics, drones, GPS sensors, and autonomous vehicles to speed up farming operations that used to be entirely manual.

Text-image annotation is used in many tasks, including improving soil conditions, forecasting crop yields, and assessing plant health. Florence-2 can play a significant role in these processes by enabling CV algorithms to recognize relevant cues, such as the presence of human farmers.

Security and Surveillance

Text-image annotation uses 2D/3D bounding boxes to identify individuals or objects in a crowd. Florence-2 labels people or items precisely by drawing a box around each one. By tracking human behavior within these bounding boxes, such systems can help detect criminal activity.

Cameras combined with labeled training datasets can recognize faces. They can also identify people along with vehicle types, colors, weapons, tools, and other accessories, all of which Florence-2 can annotate.

What's next for Florence-2?

Florence-2 sets the stage for the development of future computer vision models. It shows enormous potential for multitask learning and the integration of textual and visual information, making it an innovative CV model. As a result, it offers a practical solution for a wide range of applications without requiring extensive fine-tuning.

The model handles tasks ranging from image-level understanding to fine-grained semantic alignment. By demonstrating the effectiveness of sequence-to-sequence learning, Florence-2's architecture raises the bar for comprehensive representation learning.

Florence-2's performance opens opportunities for researchers to explore multi-task learning and cross-modal recognition further as the AI landscape continues to change rapidly.
