Convolutional Neural Networks (CNNs) are powerful tools that can process any data that looks like an image (matrices) and extract important information from it. However, in standard CNNs, every channel is given the same importance. This is what the Squeeze and Excite Network improves: it dynamically assigns more importance to certain channels (an attention mechanism for channel correlation).

Standard CNNs abstract and extract the features of an image by convolving learnable filters (kernels), with the initial layers learning edges and textures and the final layers extracting the shapes of objects. However, not all convolution filters are equally important for a given task, and as a result, a lot of computation and performance is lost.

For example, in an image containing a cat, some channels might capture details like fur texture, while others might focus on the overall shape of the cat, which can be similar to other animals. Hypothetically, the network could achieve better results if it prioritized the channels containing fur texture.

In this blog, we’ll look in depth at how Squeeze and Excitation blocks allow dynamic weighting of channel importance and create adaptive correlations between channels. For conciseness, we’ll refer to Squeeze and Excite Networks as “SE

Introduction to Squeeze and Excite Networks

Squeeze and Excite Networks are special blocks that can be added to any preexisting deep learning architecture, such as VGG-16 or ResNet-50. When added to a network, the SE block dynamically adapts and recalibrates the importance of each channel.

In the original research paper, the authors show that a ResNet-50 combined with SENet (3.87 GFLOPs) achieves accuracy equivalent to what the original ResNet-101 (7.60 GFLOPs) achieves. This means that roughly half of the computation is required with the SENet-integrated model, which is quite impressive.

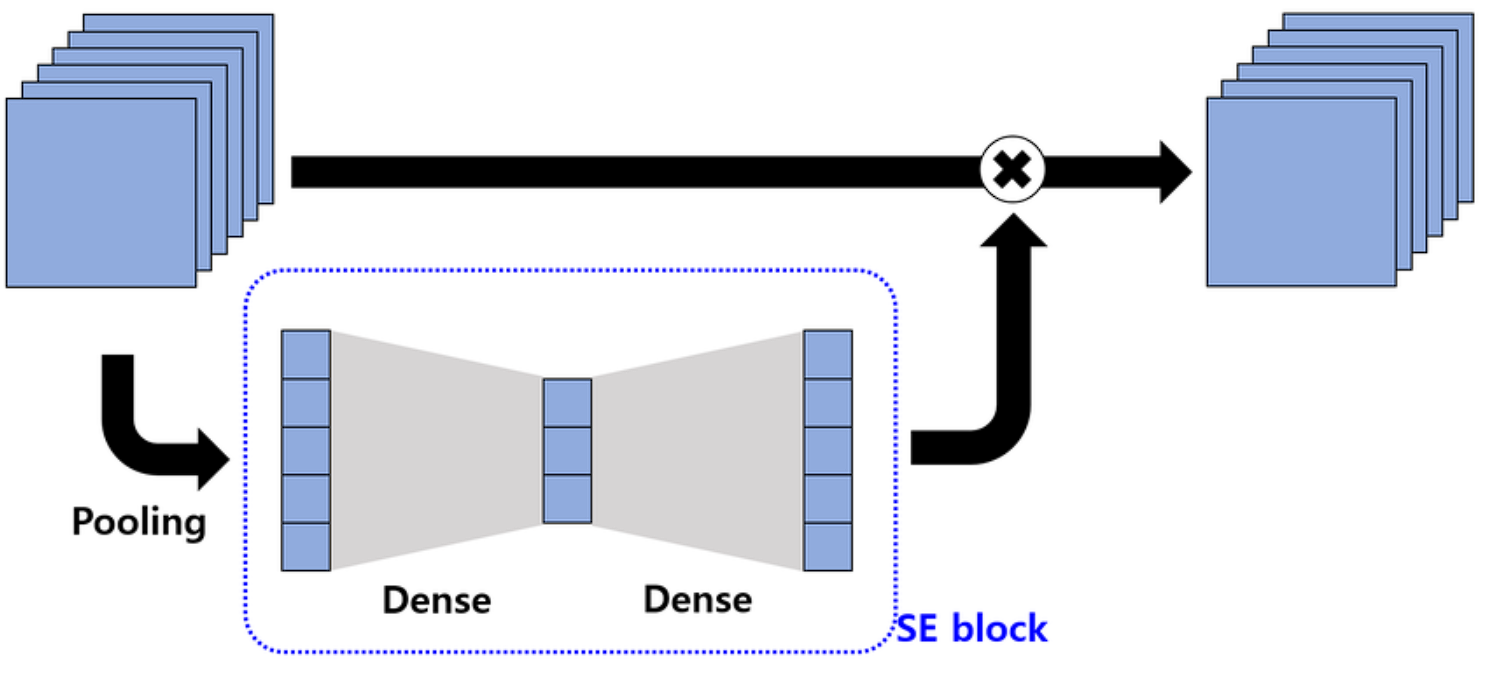

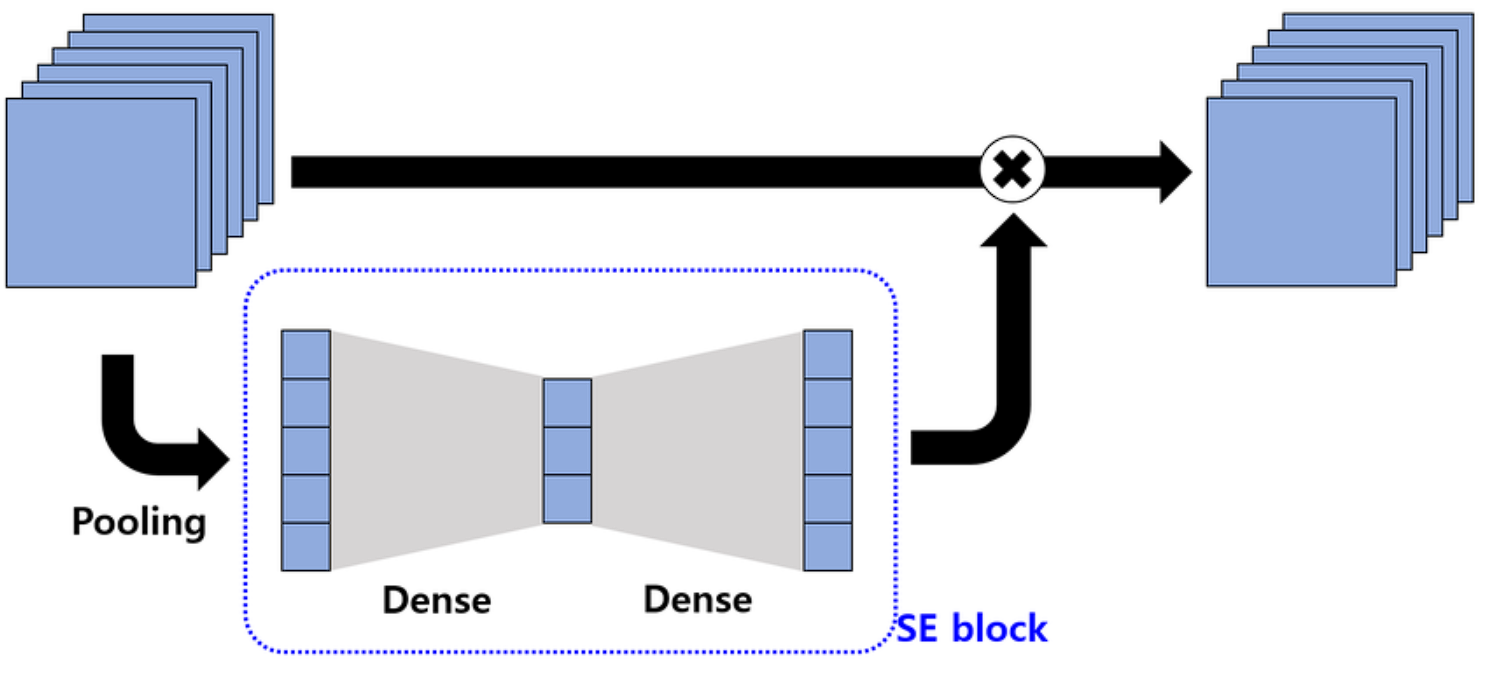

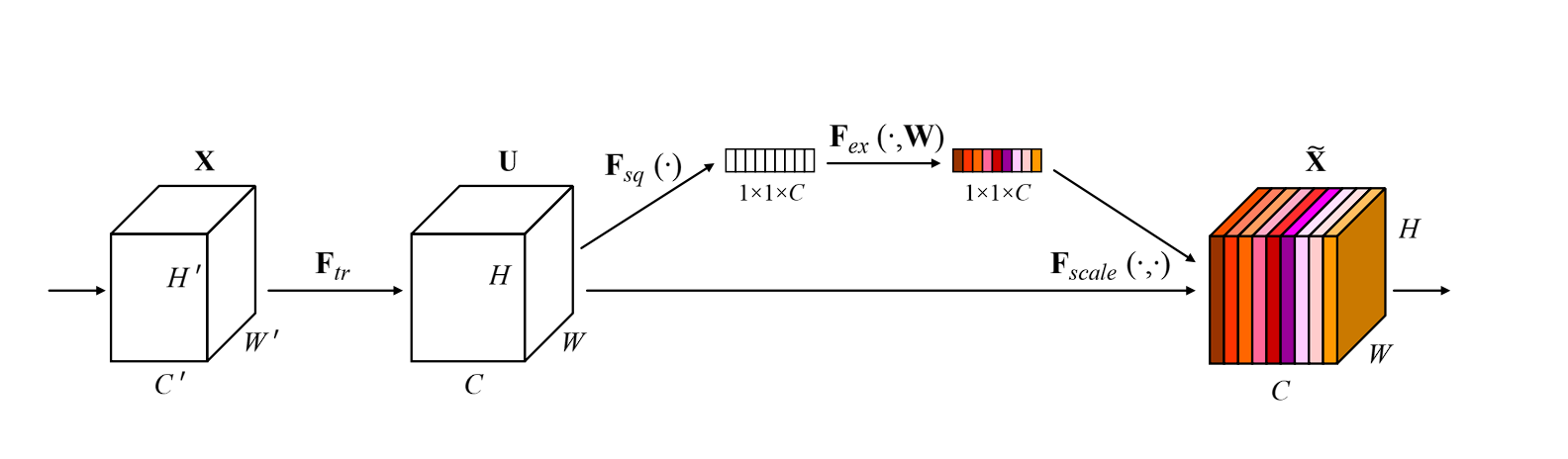

An SE block can be divided into three steps: squeeze, excite, and scale. Here is how they work:

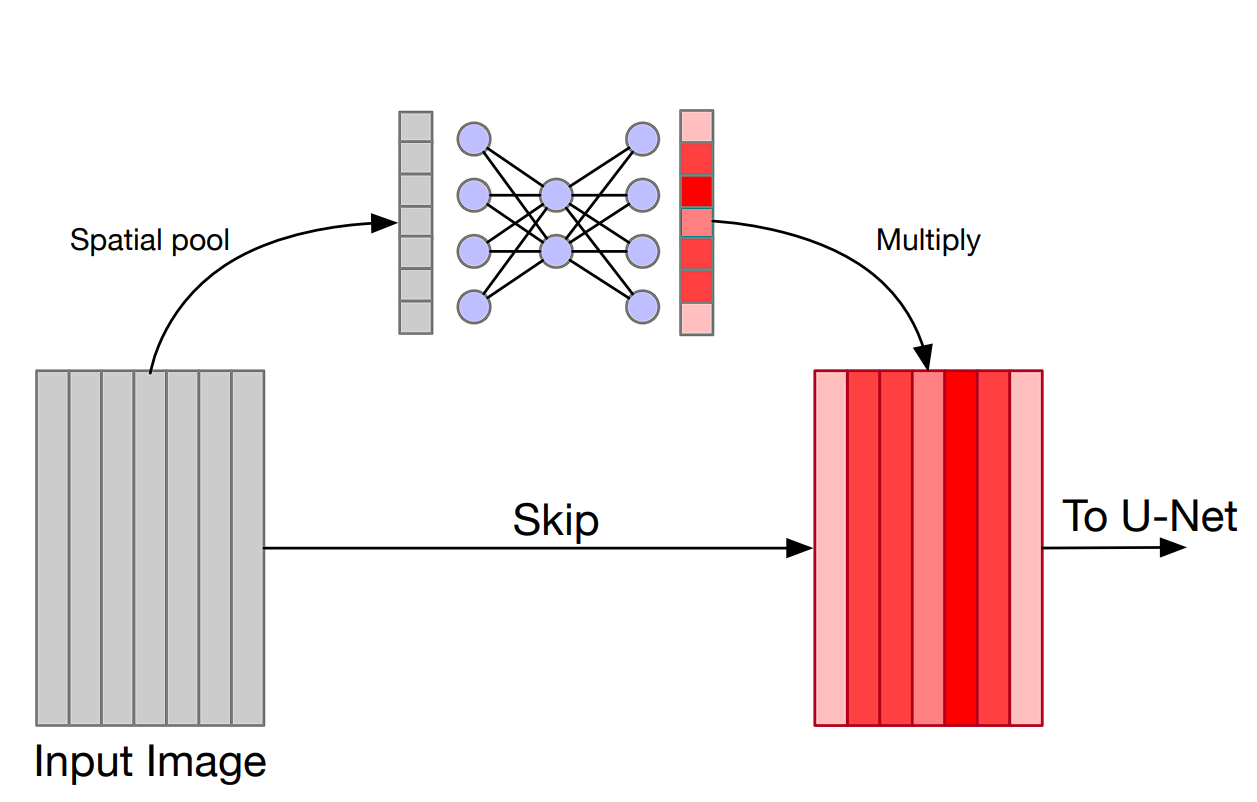

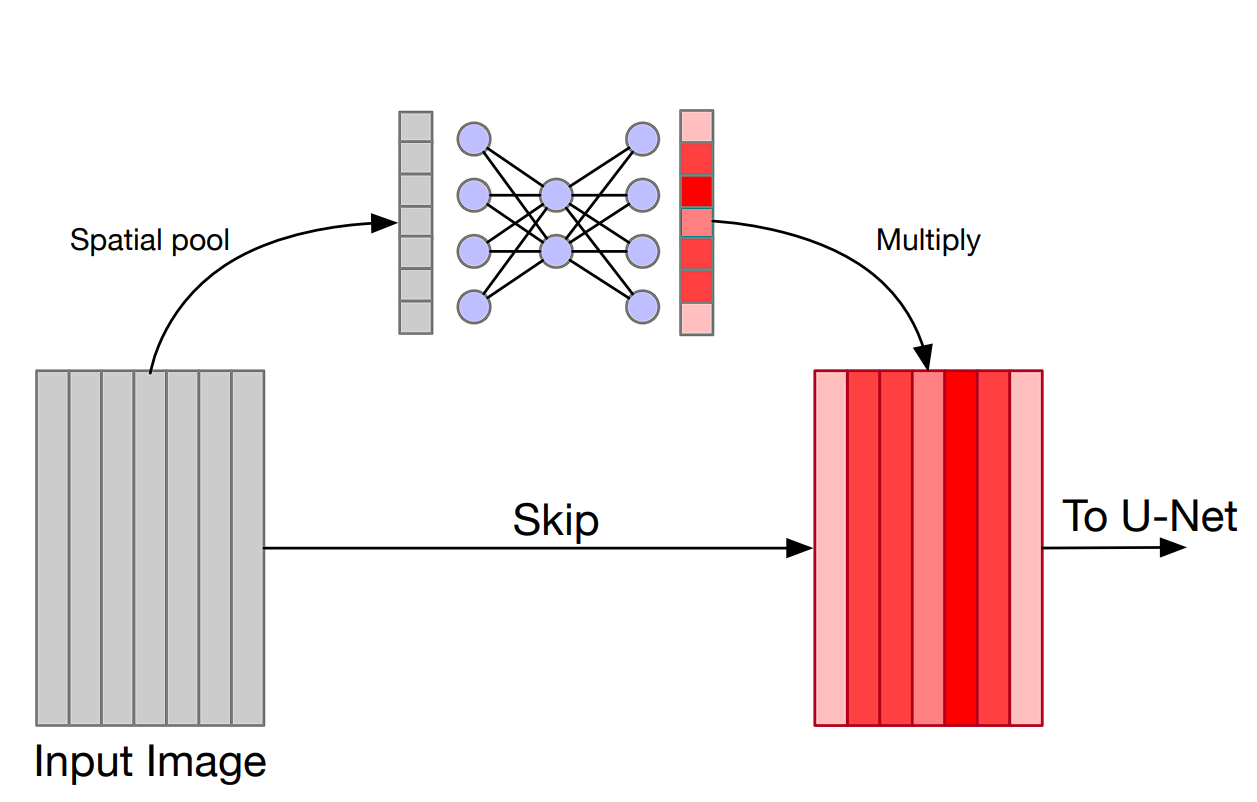

- Squeeze: This first step in the network captures the global information from each channel. It uses global average pooling to squeeze each channel of the feature map into a single numeric value. This value represents the activity of that channel.

- Excite: The second step is a small fully connected neural network that analyzes the importance of each channel based on the information captured in the previous step. The output of the excitation step is a set of weights, one per channel, that tells which channels are important.

- Scale: At the end, the weights are multiplied with the original channels of the feature map, scaling each channel according to its importance. Channels that prove to be important for the network are amplified, while the unimportant channels are suppressed and given less significance.

Overall, this is an overview of how the SE network works. Now let’s dive deeper into the technical details.

How does SENet Work?

Squeeze Operation

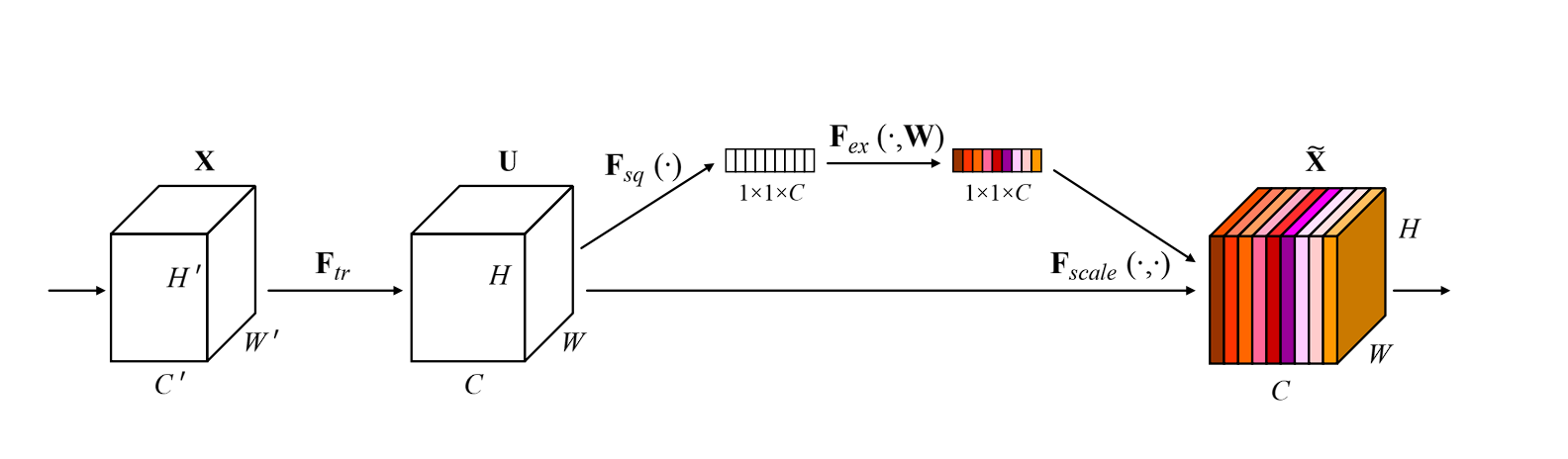

The Squeeze operation condenses the information from each channel into a single value using global average pooling, producing one vector for the whole feature map.

The global average pooling (GAP) layer is a crucial step in SENet. Standard pooling layers found in CNNs (such as max pooling) reduce the dimensionality of the input while retaining the most prominent features. In contrast, GAP reduces each channel of the feature map to a single value by taking the average of all elements in that channel.

How GAP Aggregates Feature Maps

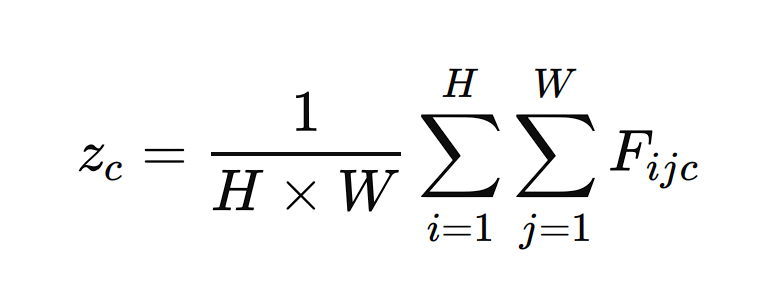

- Feature Map Input: Suppose we have a feature map F from a convolutional layer with dimensions H×W×C, where H is the height, W is the width, and C is the number of channels.

- Global Average Pooling: The GAP layer processes each channel independently. For each channel c in the feature map F, GAP computes the average of all elements in that channel. Mathematically, this can be represented as:

$$z_c = \frac{1}{H \times W} \sum_{i=1}^{H} \sum_{j=1}^{W} F_{ijc}$$

Here, $z_c$ is the output of the GAP layer for channel c, and $F_{ijc}$ is the value of the feature map at position (i, j) for channel c.

- Output Vector: The result of the GAP layer is a vector z with a length equal to the number of channels C. This vector captures the global spatial information of each channel by summarizing its contents with a single value.

Example: If a feature map has dimensions 7×7×512, the GAP layer will transform it into a 1×1×512 vector by averaging the 49 values in each 7×7 grid for all 512 channels.
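To make the squeeze step concrete, here is a minimal NumPy sketch of global average pooling on the 7×7×512 example. The function name `global_average_pool` and the random input are assumptions made for illustration, and the feature map is assumed to be stored in height, width, channel order.

```python
import numpy as np

def global_average_pool(feature_map: np.ndarray) -> np.ndarray:
    """Squeeze an H x W x C feature map into a length-C vector."""
    # Average over the two spatial axes (height and width),
    # leaving one value per channel.
    return feature_map.mean(axis=(0, 1))

# Random stand-in for a real feature map (assumption for illustration).
rng = np.random.default_rng(0)
F = rng.standard_normal((7, 7, 512))

z = global_average_pool(F)
print(z.shape)  # (512,)
```

Passing `keepdims=True` to `mean` would keep the result as a 1×1×512 array instead of a flat vector, which matches the shape quoted in the example.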

Excite Operation

Once global average pooling has been applied to the channels, resulting in a single value for each channel, the next step the SE network performs is excitation.

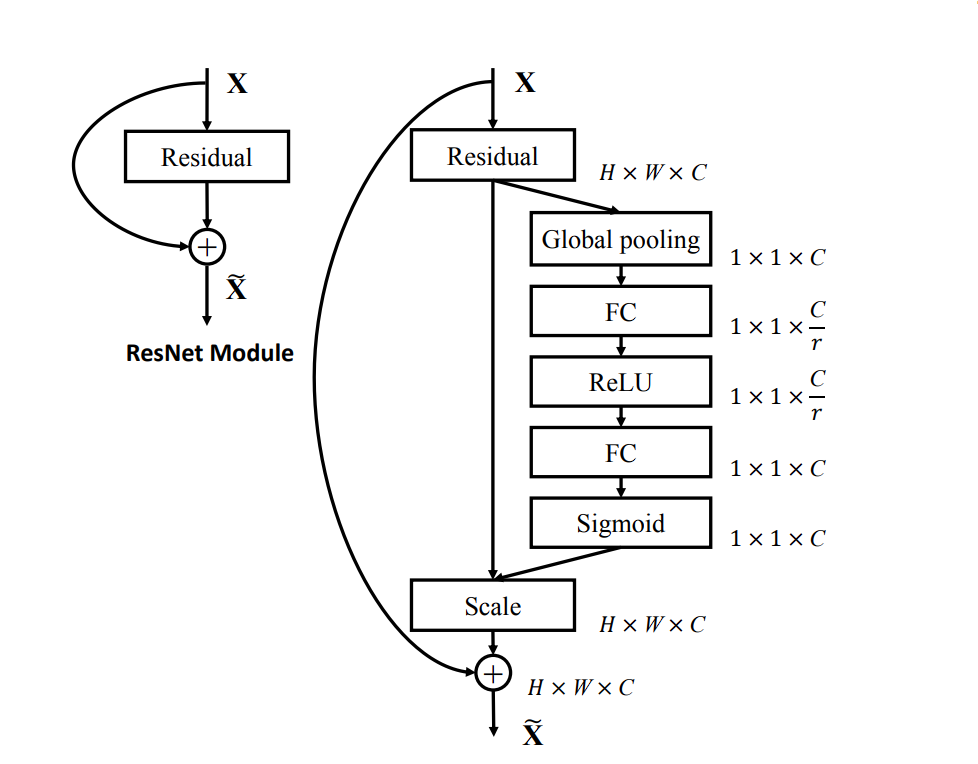

In this step, channel dependencies are obtained using a small fully connected neural network. This is where the important and less important channels are distinguished. Here is how it is done:

The input vector z is the output vector from GAP.

The first fully connected (FC) layer reduces the dimensionality of the input vector from C to a smaller size C/r, where r is the reduction ratio (a hyperparameter that can be adjusted). This dimensionality reduction step helps in capturing the channel dependencies.

A ReLU (Rectified Linear Unit) activation function is then applied to the output of the first FC layer to introduce non-linearity:

$s = \mathrm{ReLU}(W_1 z)$

The second layer is another fully connected layer, which maps s from size C/r back to size C so that there is one value per channel.

Finally, the Sigmoid activation function is applied to scale and smooth out the weights according to their importance. The Sigmoid activation outputs a value between 0 and 1 for each channel:

$w = \sigma(W_2 s)$

Here, $W_1$ and $W_2$ are the weight matrices of the first and second fully connected layers.
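The excitation step can be sketched in a few lines of NumPy. This is an illustration under stated assumptions, not a reference implementation: the function name `excite`, the bias terms, the reduction ratio r = 16, and the random weights (standing in for learned parameters) are all choices made for this example.

```python
import numpy as np

def excite(z, W1, b1, W2, b2):
    """Turn the squeezed vector z (length C) into channel weights in (0, 1)."""
    s = np.maximum(0.0, W1 @ z + b1)      # first FC layer + ReLU: C -> C/r
    logits = W2 @ s + b2                  # second FC layer: C/r -> C
    return 1.0 / (1.0 + np.exp(-logits))  # sigmoid gate, one weight per channel

C, r = 512, 16                            # r = 16 is an assumed reduction ratio
rng = np.random.default_rng(0)
z = rng.standard_normal(C)                # stand-in for the GAP output

# Random weights stand in for parameters that would normally be learned.
W1 = rng.standard_normal((C // r, C)) * 0.01
b1 = np.zeros(C // r)
W2 = rng.standard_normal((C, C // r)) * 0.01
b2 = np.zeros(C)

w = excite(z, W1, b1, W2, b2)
print(w.shape)  # (512,)
```

The bottleneck of size C/r is what keeps the block small: both weight matrices have only C × C/r entries instead of C × C.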

Scale Operation

The Scale operation uses the output from the Excitation step to rescale the original feature maps. First, the output from the sigmoid is reshaped to 1×1×C so that each channel weight in w is broadcast across the spatial dimensions H and W.

The final step is the recalibration of the channels. This is done by element-wise multiplication: each channel is multiplied by its corresponding weight.

$\tilde{F}_{ijk} = w_k \cdot F_{ijk}$

Here, $F_{ijk}$ is the value of the original feature map at position (i, j) in channel k, and $w_k$ is the weight for channel k. The output, $\tilde{F}_{ijk}$, is the recalibrated feature map value.
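The broadcasting in the scale step is easy to see on a tiny example. The sketch below assumes a height, width, channel layout; the function name `scale` and the hand-picked weights are hypothetical and chosen only to make the effect visible.

```python
import numpy as np

def scale(feature_map: np.ndarray, w: np.ndarray) -> np.ndarray:
    """Multiply every channel of an H x W x C feature map by its weight."""
    # Reshape w from (C,) to (1, 1, C) so it broadcasts over H and W.
    return feature_map * w.reshape(1, 1, -1)

F = np.ones((2, 2, 3))           # toy feature map: 2 x 2 spatial, 3 channels
w = np.array([0.0, 0.5, 1.0])    # suppress, halve, and keep the three channels

F_scaled = scale(F, w)
print(F_scaled[0, 0].tolist())   # [0.0, 0.5, 1.0]
```

Every spatial position sees the same three weights, so a channel is amplified or suppressed as a whole rather than pixel by pixel.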

The Excite operation in SENet leverages fully connected layers and activation functions to capture and model channel dependencies, generating a set of importance weights for each channel.

The Scale operation then uses these weights to recalibrate the original feature maps, enhancing the network’s representational power and improving performance on various tasks.

Integration with Existing Networks

Squeeze and Excite Networks (SENets) are highly adaptable and can be easily integrated into existing convolutional neural network (CNN) architectures, because the SE blocks operate independently of the convolution operation in whatever architecture you are using.

Moreover, in terms of performance and computation, the SE block introduces negligible added computational cost and parameters. As we have seen, it is just a couple of fully connected layers and simple operations such as GAP and element-wise multiplication.

These operations are cheap in terms of computation. However, the benefits in accuracy they provide are significant.
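A quick back-of-the-envelope count shows why the overhead is small. The sketch below compares the parameters of one SE block with those of a single 3×3 convolution that keeps the channel count unchanged. The helper names, the choice of C = 512 and r = 16, and the comparison against a bias-free 3×3 convolution are assumptions made for illustration.

```python
def se_block_params(channels: int, reduction: int, bias: bool = True) -> int:
    """Parameters of the two fully connected layers in one SE block."""
    hidden = channels // reduction
    weights = channels * hidden + hidden * channels   # FC1 and FC2 weights
    biases = (hidden + channels) if bias else 0       # optional bias terms
    return weights + biases

def conv3x3_params(channels: int) -> int:
    """Parameters of a 3x3 convolution mapping C channels to C channels (no bias)."""
    return 3 * 3 * channels * channels

C, r = 512, 16                    # assumed channel count and reduction ratio
se = se_block_params(C, r)        # 33312
conv = conv3x3_params(C)          # 2359296

print(se, conv, f"{se / conv:.2%}")
```

Under these assumptions the SE block adds well under 2% of the parameters of one convolution at the same width, and GAP and the final multiplication add no parameters at all.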

Some models into which SE blocks have been integrated:

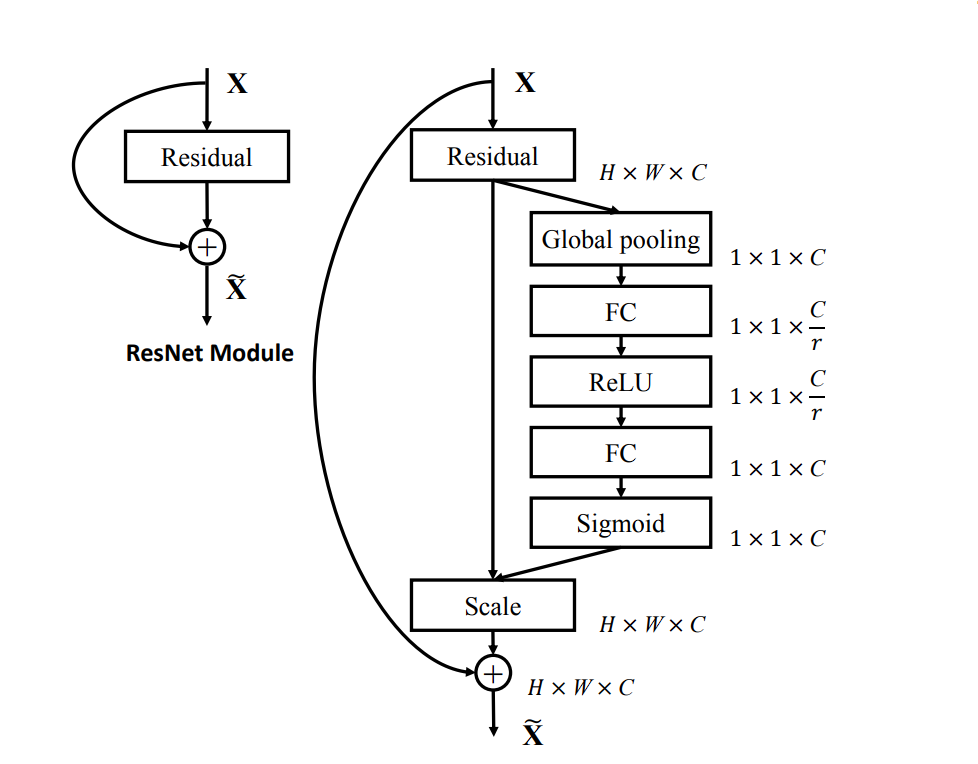

SE-ResNet: In SE-ResNet, SE blocks are added to the residual blocks of ResNet. Within each residual block, the SE block recalibrates the output feature maps of the residual branch before they are added to the skip connection. The effect of adding SE blocks is visible in the improved performance on image classification tasks.
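The sketch below puts the three steps together and shows one way an SE block can sit inside a residual block. It assumes the arrangement in which the SE block rescales the residual branch before the skip connection is added. The names `se_block`, `se_residual_block`, and `toy_residual` are hypothetical, biases are omitted, the random weights stand in for learned parameters, and the residual branch is a toy function rather than real convolutions.

```python
import numpy as np

def se_block(U, W1, W2):
    """Squeeze, excite, and scale an H x W x C feature map U."""
    z = U.mean(axis=(0, 1))                  # squeeze: one value per channel
    s = np.maximum(0.0, W1 @ z)              # excite, FC 1 + ReLU: C -> C/r
    w = 1.0 / (1.0 + np.exp(-(W2 @ s)))      # excite, FC 2 + sigmoid: C/r -> C
    return U * w                             # scale: w broadcasts over H and W

def se_residual_block(x, residual_fn, W1, W2):
    """Apply SE to the residual branch, then add the skip connection."""
    U = residual_fn(x)
    return x + se_block(U, W1, W2)

rng = np.random.default_rng(0)
H, W, C, r = 8, 8, 64, 16                    # assumed sizes for illustration
x = rng.standard_normal((H, W, C))
W1 = rng.standard_normal((C // r, C)) * 0.1  # stand-ins for learned weights
W2 = rng.standard_normal((C, C // r)) * 0.1

def toy_residual(t):
    # Placeholder for the convolutional layers of a real residual branch.
    return 0.5 * t

out = se_residual_block(x, toy_residual, W1, W2)
print(out.shape)  # (8, 8, 64)
```

Because the SE block only rescales channels, the output keeps the same shape as the input, which is why it can be dropped into an existing block without changing the layers around it.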

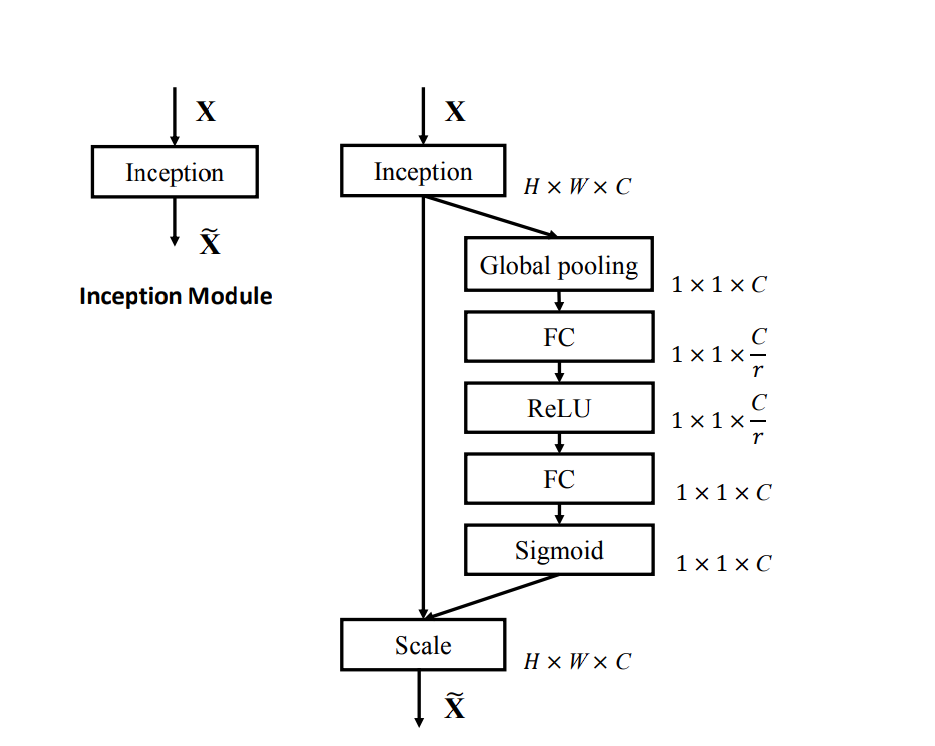

SE-Inception: In SE-Inception, SE blocks are integrated into the Inception modules. The SE block recalibrates the feature maps from the different convolutional paths within each Inception module.

SE-MobileNet: In SE-MobileNet, SE blocks are added to the depthwise separable convolutions in MobileNet. The SE block recalibrates the output of the depthwise convolution before passing it to the pointwise convolution.

SE-VGG: In SE-VGG, SE blocks are inserted after each group of convolutional layers. That is, an SE block is added after each pair of convolutional layers followed by a pooling layer.

Benchmarks and Testing

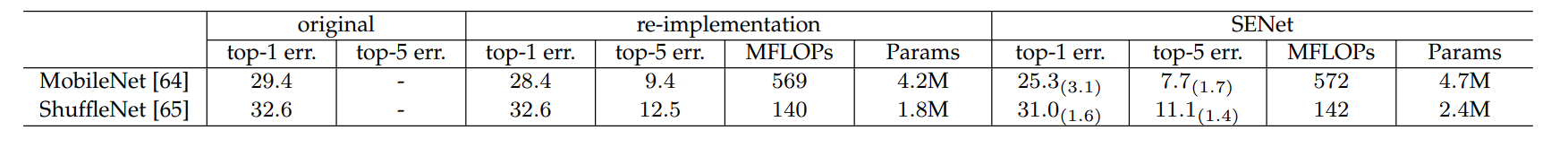

MobileNet

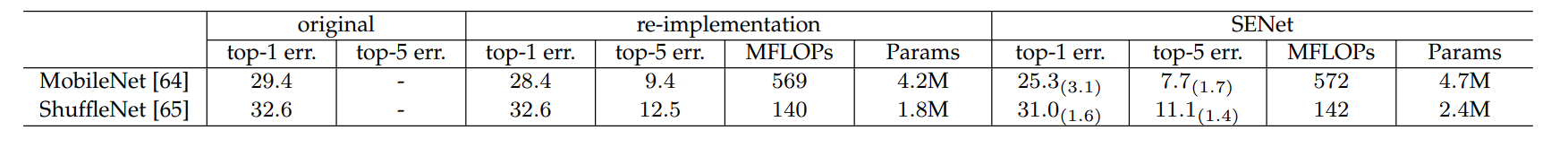

- The original MobileNet has a top-1 error of 29.4%. After re-implementation, this error is reduced to 28.4%. However, when we couple it with SENet, the top-1 error drops to 25.3%, showing a significant improvement.

- The top-5 error is 9.4% for the re-implemented MobileNet, which improves to 7.7% with SENet.

- However, using SENet increases the computation cost from 569 to 572 MFLOPs, which is a small price for the accuracy improvement achieved.

ShuffleNet

- The original ShuffleNet has a top-1 error of 32.6%. The re-implemented version maintains the same top-1 error. When enhanced with SENet, the top-1 error reduces to 31.0%, showing an improvement.

- The top-5 error is 12.5% for the re-implemented ShuffleNet, which improves to 11.1% with SENet.

- The computational cost increases slightly, from 140 to 142 MFLOPs with SENet.

In both the MobileNet and ShuffleNet models, the addition of the SE block significantly improves the top-1 and top-5 errors.
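To put these numbers side by side, the short script below computes the drop in error (in percentage points) and the relative increase in MFLOPs for both models. It uses only the re-implemented and SENet figures quoted above; the dictionary layout and the function name `summarize` are assumptions made for illustration.

```python
# (re-implemented baseline, with SENet), taken from the figures quoted above.
benchmarks = {
    "MobileNet":  {"top1": (28.4, 25.3), "top5": (9.4, 7.7),   "mflops": (569, 572)},
    "ShuffleNet": {"top1": (32.6, 31.0), "top5": (12.5, 11.1), "mflops": (140, 142)},
}

def summarize(results: dict) -> dict:
    """Error drop in percentage points and relative compute overhead in percent."""
    base_flops, se_flops = results["mflops"]
    return {
        "top1_drop": round(results["top1"][0] - results["top1"][1], 1),
        "top5_drop": round(results["top5"][0] - results["top5"][1], 1),
        "flops_overhead_pct": round(100 * (se_flops - base_flops) / base_flops, 2),
    }

summary = {name: summarize(results) for name, results in benchmarks.items()}
for name, row in summary.items():
    print(name, row)
```

For MobileNet this works out to a 3.1-point drop in top-1 error for about 0.5% more computation, and for ShuffleNet a 1.6-point drop for about 1.4% more.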

Benefits of SENet

Squeeze and Excite Networks (SENet) offer several advantages. Here are some of the benefits we can see with SENet:

Improved Performance

SENet improves the accuracy of image classification tasks by focusing on the channels that contribute the most to the task. This is just like adding an attention mechanism to channels (SE blocks provide insight into the importance of different channels by assigning weights to them). This increases the representational power of the network, as the more informative channels receive more focus.

Negligible computation overhead

The SE blocks introduce a very small number of additional parameters compared to scaling up a model. This is possible because SENet uses global average pooling, which summarizes each channel into a single value, followed by only a few simple operations.

Easy Integration with existing models

SE blocks integrate seamlessly into existing CNN architectures, such as ResNet, Inception, MobileNet, VGG, and DenseNet.

Moreover, these blocks can be applied as many times as desired:

- In different parts of the network

- From the earlier layers to the final layers of the network

- Adapting to the varying tasks performed throughout the deep learning model you integrate SE into

Robust Model

Finally, SENet makes the model more tolerant of noise, because it down-weights the channels that might be contributing negatively to the model’s performance. This ultimately helps the model generalize better on the given task.

What’s Next with Squeeze and Excite Networks

In this blog, we looked at the architecture and benefits of Squeeze and Excite Networks (SENet), which serve as an added boost to an already developed model. This is possible thanks to the “squeeze” and “excite” operations, which make the model focus on the importance of different channels in the feature maps. This is different from standard CNNs, which use fixed weights across all channels and give equal importance to all of them.

We then looked in depth at the squeeze, excite, and scale operations. The SE block first performs global average pooling, which compresses each channel into a single value. Then the fully connected layers and activation functions model the relationships between channels. Finally, the scale operation rescales each channel by multiplying it by the corresponding weight from the excitation step.

Additionally, we looked at how SENet can be integrated into existing networks such as ResNet, Inception, MobileNet, VGG, and DenseNet with minimal additional computation.

Overall, the SE block results in improved performance, robustness, and generalizability of the existing model.