YOLOv10 is the latest development in the YOLO (You Only Look Once) family of object detection models, known for real-time object detection. The YOLOv10 model pushes the performance-efficiency boundary, building on the success of its predecessors. Its improvements promise to transform real-time object detection across various applications.

Researchers have conducted extensive experiments on YOLO models, achieving notable progress. However, YOLOv10 aims to advance the post-processing and model architecture of earlier versions. The result is a new generation of the YOLO series for real-time end-to-end object detection.

Get ready for a deep dive into YOLOv10. We’ll examine the architectural changes, compare its efficiency with other YOLO models, uncover its practical uses, and demonstrate how to apply it for inference and training on your data.

YOLOv10: An Evolution of Object Detection

The YOLO series has been predominant in the field of real-time object detection for years. Each YOLO model comes in several sizes with a different balance of accuracy and speed. Below are the typical sizes for a YOLO model, including the latest YOLOv10.

- YOLO-N (Nano)

- YOLO-S (Small)

- YOLO-M (Medium)

- YOLO-B (Balanced)

- YOLO-L (Large)

- YOLO-X (X-Large)

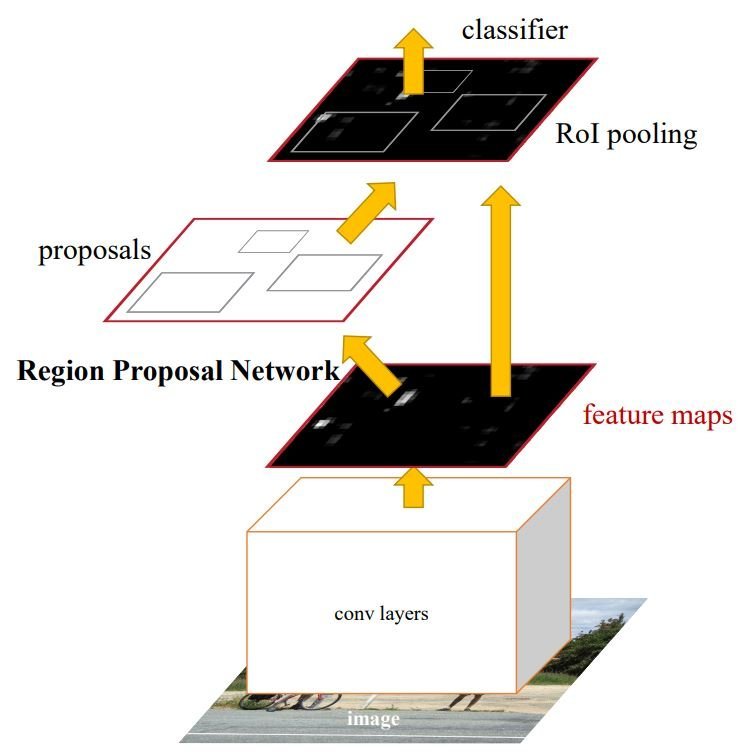

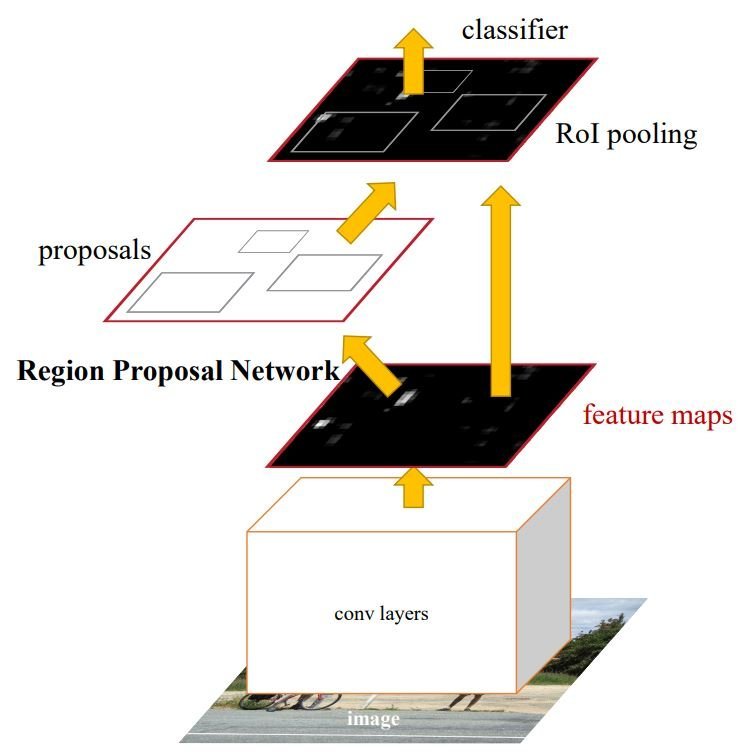

Object detection, especially in real time, has always been an important area of research in computer vision. The goal of real-time object detection is to locate and identify objects in an image with low latency. Researchers often employ variations of a Convolutional Neural Network (CNN) such as R-CNN (Region-based CNN), Fast R-CNN, Faster R-CNN, and Mask R-CNN.

However, YOLO models take a different architectural approach, offering a balance between performance and efficiency for real-time object detection. Let’s recap these fundamentals before diving into the specifics of YOLOv10.

Background

The earliest object detection method was the sliding window approach, where a fixed-size bounding box moves across the image until it finds the object of interest. Because this is resource-intensive, researchers developed more efficient approaches, such as Faster R-CNN, one of the earliest approaches moving toward real-time object detection.

The idea behind Faster R-CNN is to build on R-CNN and replace the exhaustive sliding window search with a region proposal network. This network proposes bounding boxes where an object is more likely to be. Convolutional layers then extract feature maps that are used to classify the objects within the bounding boxes. Additionally, Faster R-CNN includes optimizations to increase speed and efficiency.

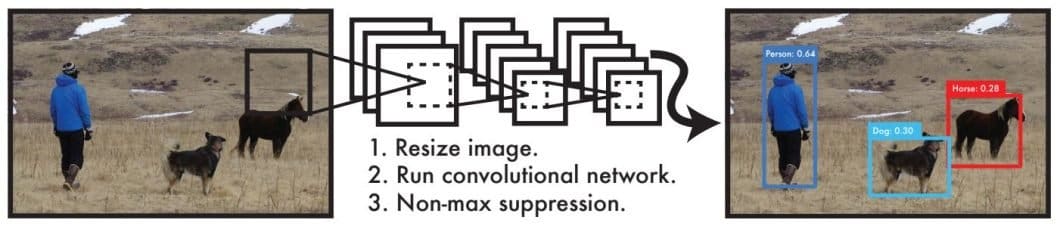

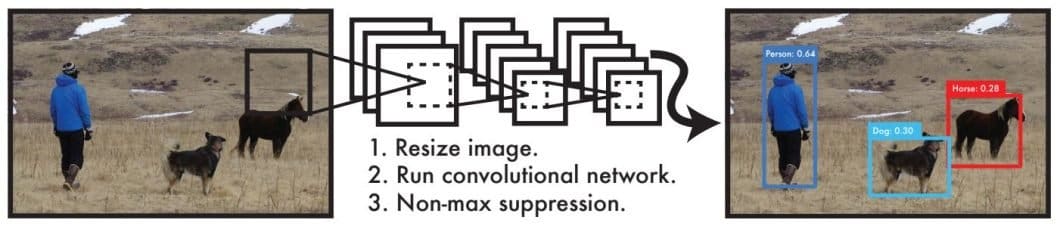

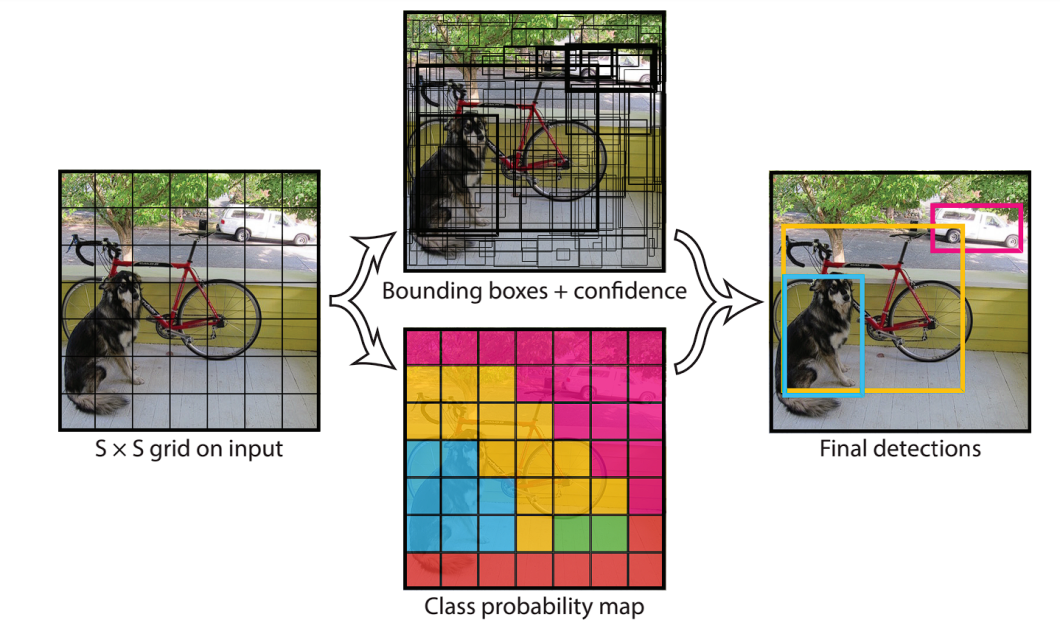

However, YOLO models take a different approach. These models use a single-shot method, where both detection and classification happen in one step. YOLO models, including YOLOv10, frame object detection as a regression problem, where a single neural network predicts the bounding boxes and the classes in one evaluation.

The YOLO detection system works as a pipeline built around a single network, so it can be optimized end to end for detection performance.

- The pipeline first resizes the image to the input size of the YOLO model.

- It then runs a Convolutional Neural Network on the image.

- Finally, the pipeline applies confidence thresholding and Non-Maximum Suppression (NMS) to refine the CNN’s detections.

Non-maximum suppression (NMS) is a technique used in object detection to remove duplicate bounding boxes and keep only the relevant ones. By tuning this post-processing technique and others like optimization, data augmentation, and architectural changes, researchers create different versions of YOLO models. As we’ll see later, YOLOv10’s most notable evolution is related to the NMS technique.
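To make the post-processing step concrete, here is a minimal sketch of confidence thresholding followed by greedy NMS in plain Python. It is an illustration only, not the code used inside any YOLO release: the function names, the `(x1, y1, x2, y2)` box format, and the threshold values are assumptions chosen for this example.

```python
def iou(a, b):
    """Intersection over Union of two boxes given as (x1, y1, x2, y2)."""
    ix1, iy1 = max(a[0], b[0]), max(a[1], b[1])
    ix2, iy2 = min(a[2], b[2]), min(a[3], b[3])
    inter = max(0.0, ix2 - ix1) * max(0.0, iy2 - iy1)
    area_a = (a[2] - a[0]) * (a[3] - a[1])
    area_b = (b[2] - b[0]) * (b[3] - b[1])
    union = area_a + area_b - inter
    return inter / union if union > 0 else 0.0


def nms(boxes, scores, conf_thres=0.25, iou_thres=0.5):
    """Return the indices of the boxes that survive, highest score first."""
    # Step 1: confidence thresholding, then sort the survivors by score.
    order = sorted(
        (i for i, s in enumerate(scores) if s >= conf_thres),
        key=lambda i: scores[i],
        reverse=True,
    )
    # Step 2: greedily keep a box only if it does not overlap a kept box too much.
    keep = []
    for i in order:
        if all(iou(boxes[i], boxes[j]) <= iou_thres for j in keep):
            keep.append(i)
    return keep


# Two near-duplicate boxes, one separate box, and one low-confidence box.
boxes = [(10, 10, 50, 50), (12, 12, 52, 52), (100, 100, 150, 150), (0, 0, 5, 5)]
scores = [0.9, 0.8, 0.7, 0.1]
kept = nms(boxes, scores)
print(kept)
```

The second box is suppressed because it overlaps the first one heavily, and the last box never reaches NMS because its score is below the confidence threshold. The nested overlap checks are the per-image cost that an NMS-free model avoids.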

Benchmarks

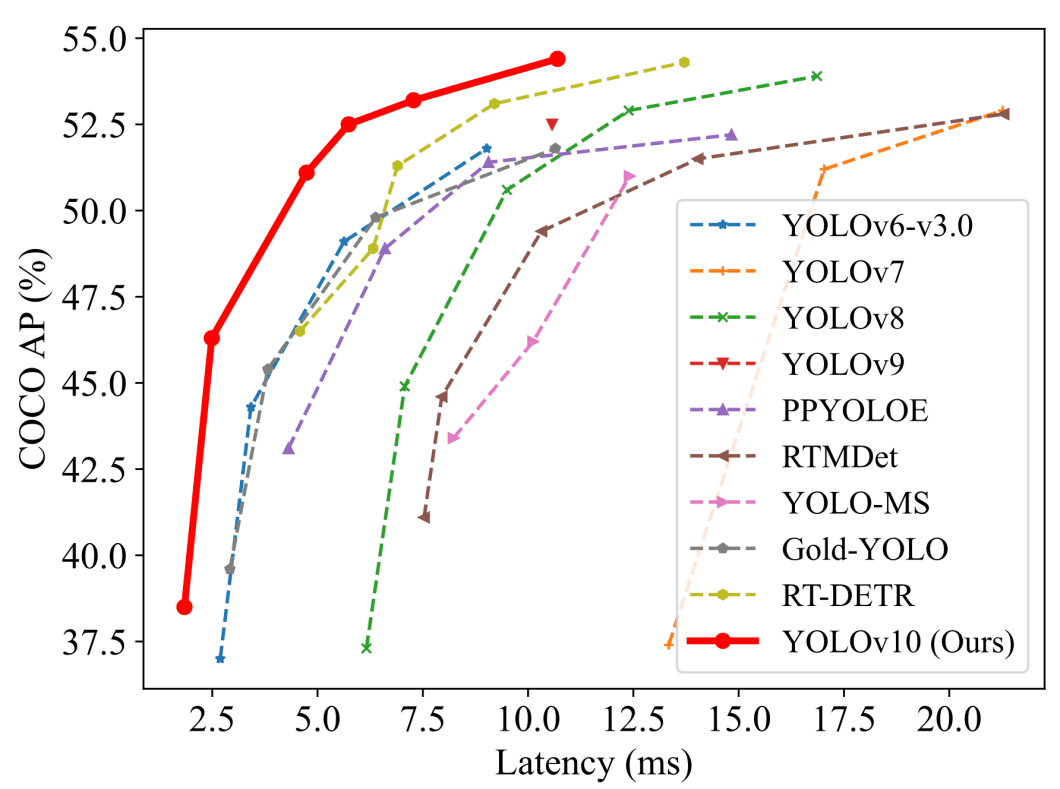

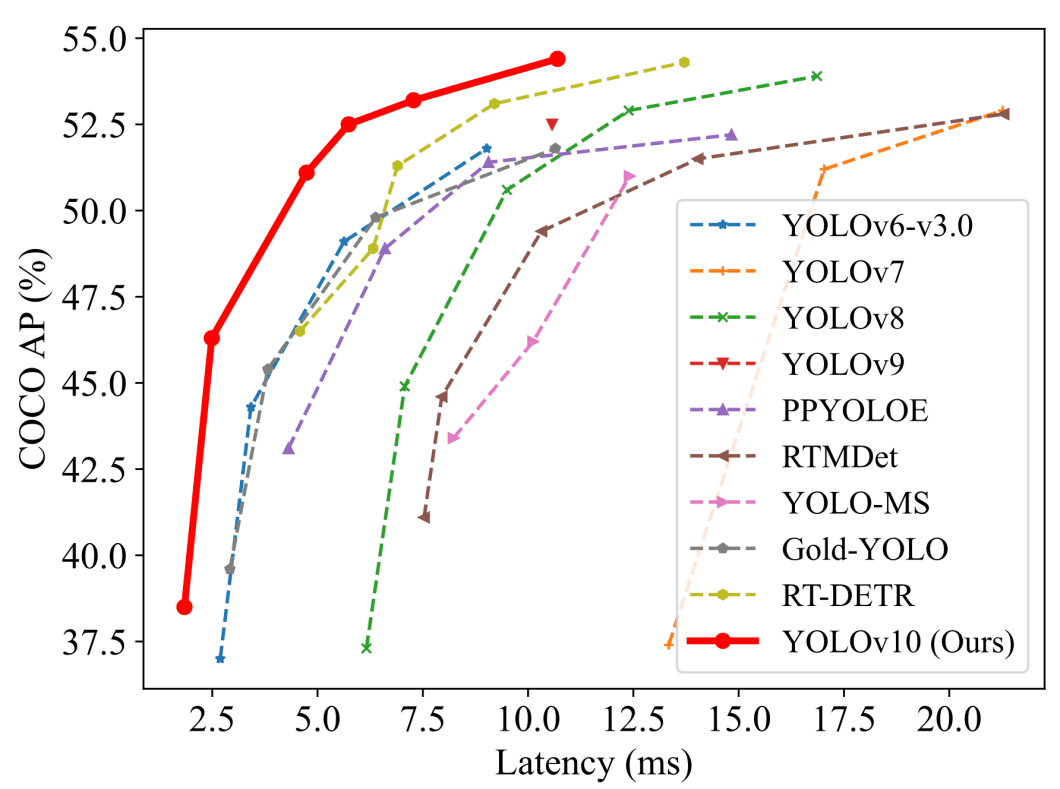

To understand the advancements in YOLOv10, we will start by comparing its benchmark results to those of earlier YOLO versions. The two main performance measures used with real-time object detection models are average precision (AP), or mean AP (mAP), and latency. We measure these metrics on benchmark datasets like COCO.

While this comparison shows only latency and AP, we can already see how the YOLOv10 model significantly improves these measures. To understand the full picture, we need a more detailed comparison with additional metrics that show where YOLOv10 excels.

| Model | Params (M) | FLOPs (G) | APval (%) | Latency (ms) | Latency (Forward) (ms) |
|---|---|---|---|---|---|
| YOLOv6-3.0-S | 18.5 | 45.3 | 44.3 | 3.42 | 2.35 |
| YOLOv8-S | 11.2 | 28.6 | 44.9 | 7.07 | 2.33 |
| YOLOv9-S | 7.1 | 26.4 | 46.7 | – | – |
| YOLOv10-S | 7.2 | 21.6 | 46.3 / 46.8 | 2.49 | 2.39 |
| YOLOv6-3.0-M | 34.9 | 85.8 | 49.1 | 5.63 | 4.56 |
| YOLOv8-M | 25.9 | 78.9 | 50.6 | 9.50 | 5.09 |
| YOLOv9-M | 20.0 | 76.3 | 51.1 | – | – |
| YOLOv10-M | 15.4 | 59.1 | 51.1 / 51.3 | 4.74 | 4.63 |
| YOLOv8-L | 43.7 | 165.2 | 52.9 | 12.39 | 8.06 |
| YOLOv10-L | 24.4 | 120.3 | 53.2 / 53.4 | 7.28 | 7.21 |
| YOLOv8-X | 68.2 | 257.8 | 53.9 | 16.86 | 12.83 |
| YOLOv10-X | 29.5 | 160.4 | 54.4 | 10.70 | 10.60 |

As the table shows, YOLOv10 achieves state-of-the-art performance across various scales. Compared to baseline models like YOLOv8, YOLOv10 has a range of improvements. The S/M/L/X sizes achieve 1.4%/0.5%/0.3%/0.5% AP improvements with 36%/41%/44%/57% fewer parameters and 65%/50%/41%/37% lower latencies. Importantly, YOLOv10 achieves superior trade-offs between accuracy and computational cost.

These improvements over other YOLO versions like YOLOv9, YOLOv8, and YOLOv6 indicate the effectiveness of YOLOv10’s architectural design. Next, let’s explore that design.
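The percentages quoted above can be re-derived from the table. The short script below is only a sanity check: it copies the YOLOv8 and YOLOv10 rows (parameters, AP, latency) and, as an assumption, uses the first of the two AP values listed for YOLOv10 wherever the table gives a pair.

```python
# (params in M, AP in %, latency in ms), copied from the table above.
rows = {
    "S": {"v8": (11.2, 44.9, 7.07), "v10": (7.2, 46.3, 2.49)},
    "M": {"v8": (25.9, 50.6, 9.50), "v10": (15.4, 51.1, 4.74)},
    "L": {"v8": (43.7, 52.9, 12.39), "v10": (24.4, 53.2, 7.28)},
    "X": {"v8": (68.2, 53.9, 16.86), "v10": (29.5, 54.4, 10.70)},
}


def compare(v8, v10):
    """Return the AP gain and the relative parameter and latency reductions."""
    p8, ap8, lat8 = v8
    p10, ap10, lat10 = v10
    return {
        "ap_gain": round(ap10 - ap8, 1),
        "fewer_params_pct": round(100 * (1 - p10 / p8)),
        "lower_latency_pct": round(100 * (1 - lat10 / lat8)),
    }


summary = {size: compare(r["v8"], r["v10"]) for size, r in rows.items()}
for size, stats in summary.items():
    print(size, stats)
```

Note that the AP gain is a difference in percentage points, while the parameter and latency figures are relative reductions.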

The Architecture Of YOLOv10

Architecture design is a fundamental challenge in YOLO models because of its effect on accuracy and speed. Researchers have explored different design strategies for YOLO models, but the detection pipeline of most YOLO models remains the same. There are two parts to the pipeline.

- Forward process

- NMS postprocessing

Additionally, YOLO architecture design usually consists of three main components.

- Backbone: Used for feature extraction, creating a representation of the image.

- Neck: This component, introduced in YOLOv4, is the bridge between the backbone and the head. It combines features across different scales from the extracted features.

- Head: This is where prediction happens. It predicts the bounding boxes and the classes of the objects.

With that in mind, we will look at the key improvements and architectural design of YOLOv10.

Key Improvements

Since YOLOs frame object detection as a regression problem, the model divides the image into a grid of cells.

Each cell is responsible for predicting multiple bounding boxes. In YOLOs, each ground-truth object (the actual object in the training image) is associated with multiple predicted bounding boxes.

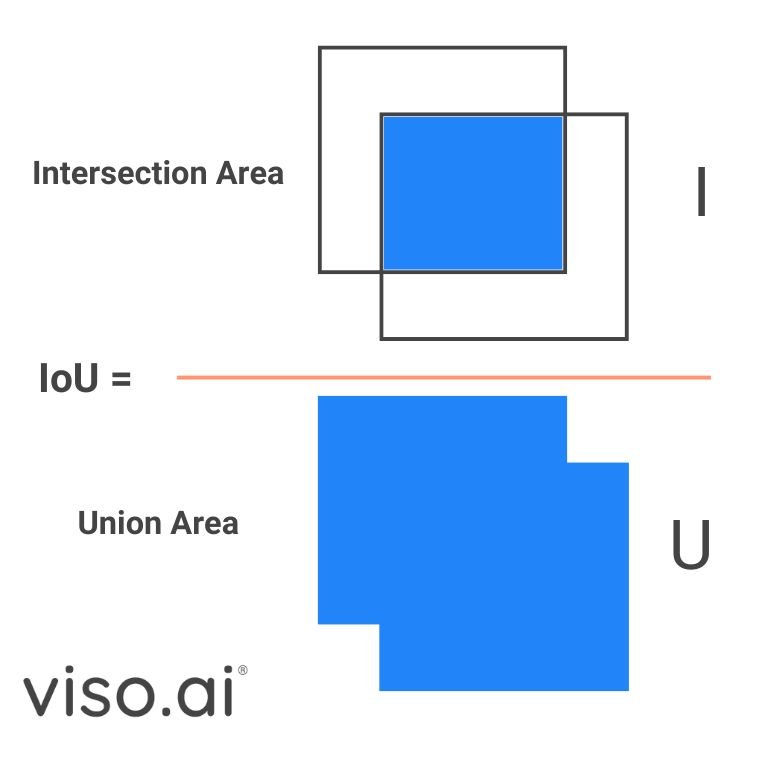

This one-to-many label assignment strategy has shown strong performance but requires Non-Maximum Suppression (NMS) during inference. NMS relies on Intersection over Union (IoU), a metric that measures the overlap between two bounding boxes. By setting an IoU threshold, NMS filters out predicted boxes that overlap too much with a higher-scoring box.

However, this post-processing step slows down inference, preventing YOLOs from reaching their optimal performance. YOLOv10 eliminates the NMS post-processing step with NMS-free training. The researchers use a consistent dual assignments training strategy that effectively reduces latency.

Consistent dual assignment lets the model make multiple predictions for an object during training, with a confidence score for each. During inference, the model keeps only the highest-confidence bounding box for each object, reducing inference time without sacrificing accuracy.

Additionally, YOLOv10 includes improvements in the optimization and architecture of the model.

- Holistic Design: This refers to the optimization of the model’s various components. The holistic approach maximizes the efficiency and accuracy of each. We’ll delve deeper into the specifics of this design later.

- Improved Architecture and Capabilities: This includes changes to the convolutional layers and the addition of partial self-attention modules, which enhance the model without a large increase in computational cost.

Next, we will look at the components of the YOLOv10 model, exploring the improvements.

Components

YOLOv10’s components build upon the success of earlier YOLO versions, retaining much of their structure while introducing key innovations. During training, YOLOs usually use a one-to-many assignment strategy, which needs NMS post-processing. Earlier works have explored alternatives like one-to-one matching, which assigns only one prediction to each object and thus eliminates NMS, but this introduced extra inference overhead.

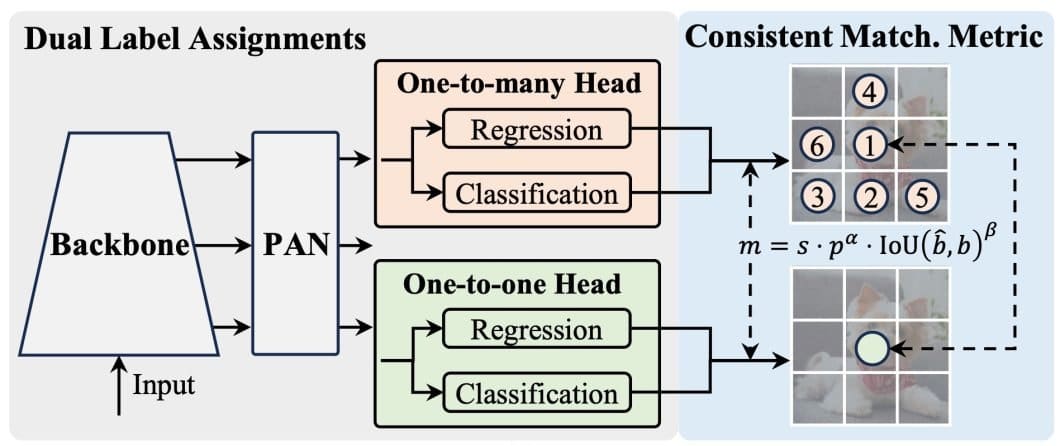

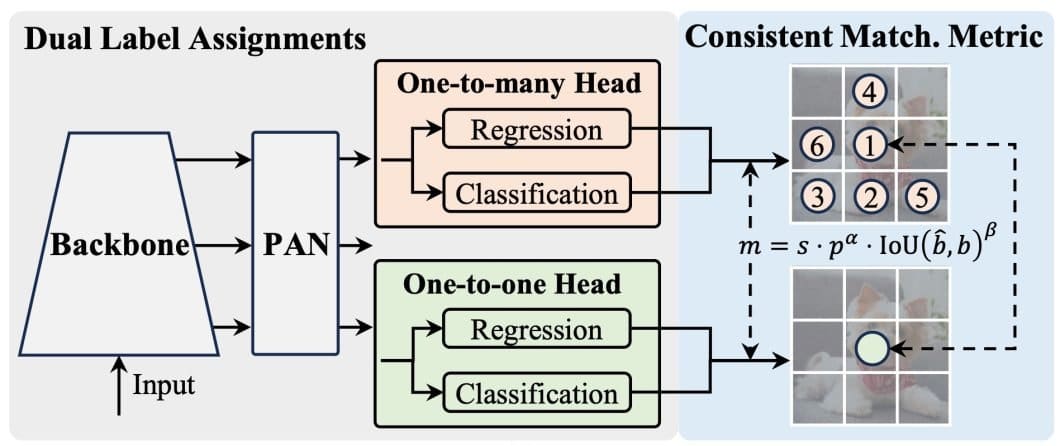

YOLOv10 introduces dual label assignments and a consistent matching metric. This combines the best of the one-to-one and one-to-many label assignments and achieves high performance and efficiency.

As shown in the figure above, YOLOv10 adds an additional one-to-one head to the YOLO architecture. This head retains the same structure and optimization as the original one-to-many head.

- While training the model, both heads are jointly optimized, giving the backbone and the neck rich supervision.

- The rich supervision comes from the ability of the one-to-many assignment strategy to let the model consider multiple potential bounding boxes for each ground-truth object. This gives the backbone and neck more information to learn from.

- The consistent matching metric steers the supervision of the one-to-one head in the same direction as that of the one-to-many head. The metric measures the IoU agreement between both heads and aligns their predictions.

- During inference, the one-to-many head is discarded and the one-to-one head makes the predictions. YOLOv10 also adopts a top-one selection strategy, which keeps the extra training time low and adds no inference cost.
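The relationship between the two heads can be illustrated with a toy example. The sketch below assumes a matching score of the form `p ** alpha * iou ** beta` (classification probability and IoU with the ground-truth box); that form, the exponents, and all numbers are illustrative assumptions, not values taken from this article. When both heads rank candidates with the same score, the single prediction chosen by the one-to-one head is also the best sample of the one-to-many head.

```python
def match_score(cls_prob, iou, alpha=0.5, beta=6.0):
    """Assumed matching score: higher means a better match to the ground truth."""
    return (cls_prob ** alpha) * (iou ** beta)


# (class probability, IoU with the ground-truth box) for five candidate predictions.
candidates = [(0.9, 0.60), (0.7, 0.85), (0.8, 0.80), (0.3, 0.90), (0.95, 0.40)]

scores = [match_score(p, i) for p, i in candidates]
ranked = sorted(range(len(candidates)), key=lambda k: scores[k], reverse=True)

one_to_many = ranked[:3]  # several positives per object: rich training signal
one_to_one = ranked[:1]   # a single positive per object: no duplicates to suppress

print("one-to-many positives:", one_to_many)
print("one-to-one positive:", one_to_one)
```

Because the one-to-one choice is always the top-ranked one-to-many sample under a shared score, the two heads are pushed toward the same prediction instead of competing with each other.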

The backbone and neck are also important components in any YOLO model. In YOLOv10, the researchers employed an enhanced version of CSPNet for feature extraction. They also used PAN layers to combine features from different scales within the neck.

Holistic Design-Efficiency-Driven

YOLOv10 aims to optimize its components from both efficiency and accuracy perspectives. Starting with the efficiency-driven model design, YOLOv10 applies optimizations to the downsampling layers, the basic building block stages, and the head.

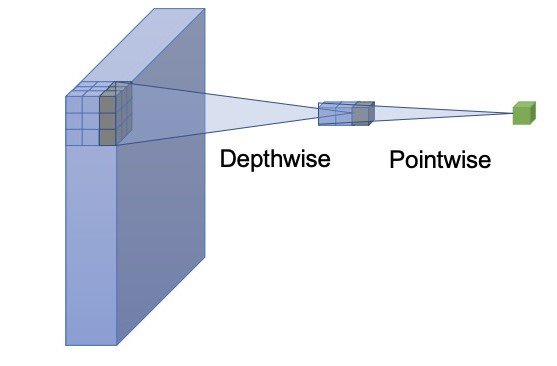

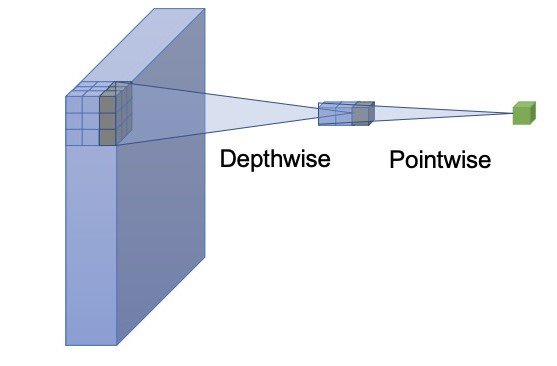

The first optimization is the lightweight classification head using depthwise separable convolution. YOLO heads usually have a regression branch and a classification branch. A lightweight classification head reduces inference time without greatly hurting performance. A depthwise separable convolution consists of a depthwise and a pointwise convolution. The one adopted in YOLOv10 uses a 3×3 kernel followed by a 1×1 convolution.

The second optimization is spatial-channel decoupled downsampling. YOLOs typically use standard 3×3 convolutions with a stride of 2. Instead, YOLOv10 uses a pointwise convolution to adjust the channel dimension and a depthwise convolution for spatial downsampling. This approach separates the two operations, reducing computational cost and parameter count.
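A quick parameter count shows why decoupling helps. The sketch below compares one standard 3×3 convolution against a pointwise convolution followed by a 3×3 depthwise convolution. It assumes the layer doubles the channel count while halving the resolution, and it ignores biases and normalization layers; the function names and the channel width of 256 are only for illustration.

```python
def standard_downsample_params(c_in, c_out, k=3):
    """One k x k convolution that changes channels and, with stride 2, resolution."""
    return k * k * c_in * c_out


def decoupled_downsample_params(c_in, c_out, k=3):
    """Pointwise conv for channels, then a depthwise k x k conv for resolution."""
    pointwise = c_in * c_out   # 1x1 convolution: adjusts the channel dimension
    depthwise = k * k * c_out  # one k x k filter per channel, applied with stride 2
    return pointwise + depthwise


c = 256  # illustrative input width
std = standard_downsample_params(c, 2 * c)
dec = decoupled_downsample_params(c, 2 * c)
print(f"standard:  {std:,} weights")
print(f"decoupled: {dec:,} weights ({std / dec:.1f}x fewer)")
```

The stride does not change the number of weights, only where the filters are applied, which is why it does not appear in either formula.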

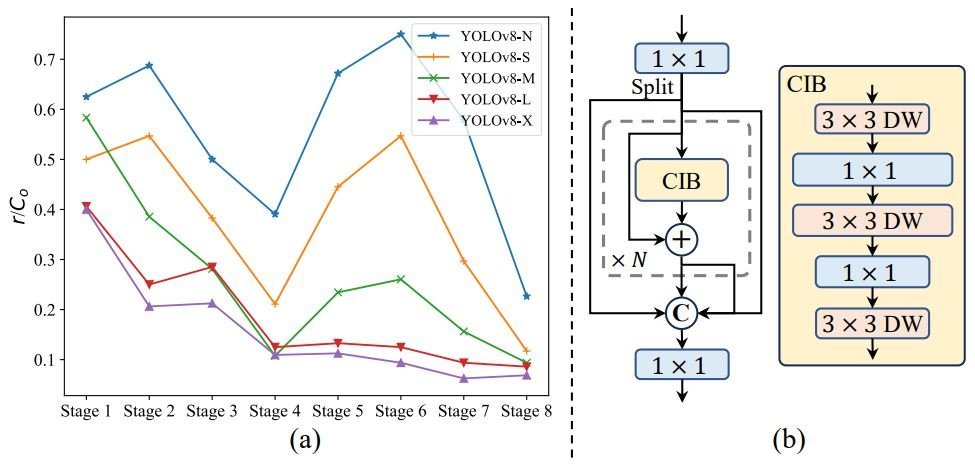

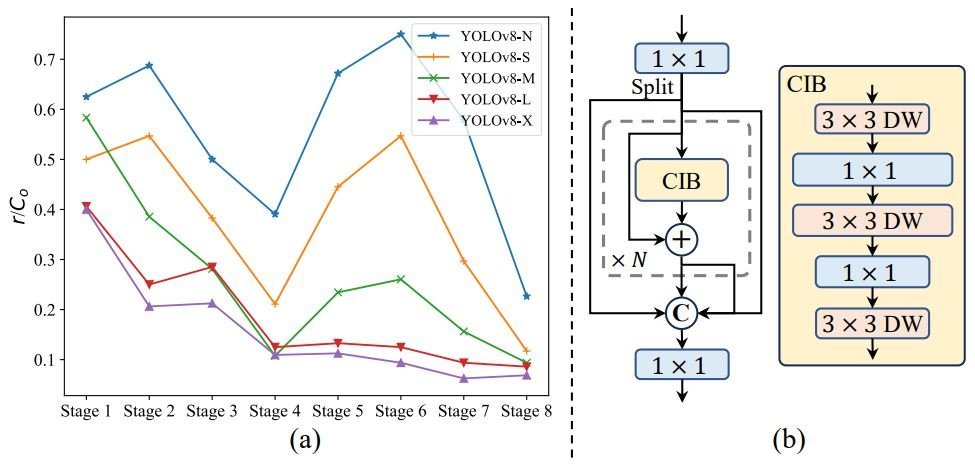

Additionally, YOLOv10 uses a third optimization for efficiency, the rank-guided block design. YOLOs usually use the same basic building block for all stages. The researchers behind YOLOv10 therefore introduce an intrinsic rank metric to analyze the redundancy of model stages.

The analysis shows that deep stages and large models are prone to more redundancy, as shown in part (a) of the figure above. This causes inefficiency and suboptimal performance.

To address this, they introduce the rank-guided block design:

- Compact inverted block (CIB): Uses cost-effective depthwise convolutions for spatial mixing and pointwise convolutions for channel mixing, as shown in part (b) of the figure above.

- Rank-guided block allocation: Sort all stages of a model by their intrinsic ranks in ascending order, then progressively replace redundant blocks with CIBs in the stages where doing so doesn’t affect performance.
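The allocation procedure can be sketched as a simple greedy loop. Everything concrete here is hypothetical: the stage names, the rank values, and `toy_evaluate`, which stands in for actually training and validating a model with CIBs in the given stages.

```python
def allocate_cib(intrinsic_ranks, evaluate, tolerance=0.0):
    """Greedily pick the stages whose blocks can be swapped for CIBs.

    intrinsic_ranks: dict mapping stage name -> intrinsic rank (lower = more redundant)
    evaluate: callable taking a frozenset of CIB stages and returning accuracy
    """
    baseline = evaluate(frozenset())
    replaced = set()
    # Visit the most redundant (lowest-rank) stages first.
    for stage in sorted(intrinsic_ranks, key=intrinsic_ranks.get):
        trial = replaced | {stage}
        if evaluate(frozenset(trial)) >= baseline - tolerance:
            replaced = trial  # no degradation: keep the cheaper block
        else:
            break             # accuracy dropped: stop replacing
    return replaced


# Hypothetical ranks: deeper stages are more redundant.
ranks = {"stage1": 0.9, "stage2": 0.7, "stage3": 0.4, "stage4": 0.2}


def toy_evaluate(cib_stages):
    """Made-up accuracy: swapping blocks in stage1 or stage2 costs accuracy."""
    penalty = 0.3 * ("stage2" in cib_stages) + 0.5 * ("stage1" in cib_stages)
    return 46.0 - penalty


chosen = allocate_cib(ranks, toy_evaluate)
print(sorted(chosen))
```

In this toy run only the two deepest stages receive CIBs, which mirrors the observation that redundancy concentrates in deep stages.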

Holistic Design-Accuracy-Driven

Efficiency and accuracy are the main trade-off in object detection, but YOLOv10’s holistic approach minimizes this trade-off. The researchers explore large-kernel convolution and self-attention for the accuracy-driven design, boosting performance at minimal cost.

The first accuracy-driven optimization is large-kernel convolution. Using large-kernel convolutions can enlarge the model’s receptive field, improving object detection. However, using these convolutions in all stages can cause problems detecting small objects or be inefficient in high-resolution stages.

Therefore, YOLOv10 uses large-kernel depthwise convolutions in the compact inverted block (CIB) only in the deeper stages and only for small model scales. Specifically, the researchers increase the kernel size from 3×3 to 7×7 in the second depthwise convolution of the CIB.

Additionally, they use structural reparameterization, introducing an additional 3×3 depthwise convolution branch that mitigates potential optimization issues and retains the benefits of smaller kernels.

This optimization enhances the model’s ability to capture fine details and contextual information without sacrificing efficiency or adding cost during inference.
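The reason an extra branch can be free at inference time is that two parallel convolutions over the same input can be folded into one. The sketch below demonstrates this for a single channel (a depthwise convolution filters each channel independently) in plain Python. It is a simplified illustration that ignores normalization layers, and the helper names are made up for this example.

```python
import random


def conv2d_same(image, kernel):
    """Single-channel 2D cross-correlation with zero padding (output size = input size)."""
    h, w = len(image), len(image[0])
    k = len(kernel)
    r = k // 2
    out = [[0.0] * w for _ in range(h)]
    for y in range(h):
        for x in range(w):
            total = 0.0
            for dy in range(k):
                for dx in range(k):
                    yy, xx = y + dy - r, x + dx - r
                    if 0 <= yy < h and 0 <= xx < w:
                        total += image[yy][xx] * kernel[dy][dx]
            out[y][x] = total
    return out


def merge_kernels(large, small):
    """Zero-pad the small kernel to the large kernel's size and add the two."""
    offset = (len(large) - len(small)) // 2
    merged = [row[:] for row in large]
    for i, row in enumerate(small):
        for j, value in enumerate(row):
            merged[i + offset][j + offset] += value
    return merged


def random_grid(n):
    return [[random.uniform(-1, 1) for _ in range(n)] for _ in range(n)]


random.seed(0)
image, k7, k3 = random_grid(8), random_grid(7), random_grid(3)

# Training-time view: a 7x7 branch and a 3x3 branch whose outputs are summed.
two_branch = [
    [a + b for a, b in zip(row7, row3)]
    for row7, row3 in zip(conv2d_same(image, k7), conv2d_same(image, k3))
]
# Inference-time view: a single 7x7 convolution with the merged kernel.
one_branch = conv2d_same(image, merge_kernels(k7, k3))

max_diff = max(
    abs(a - b) for ra, rb in zip(two_branch, one_branch) for a, b in zip(ra, rb)
)
print(f"max difference between the two forms: {max_diff:.2e}")
```

The two forms agree up to floating-point error, so the 3×3 branch can help optimization during training and then disappear into the 7×7 kernel for deployment.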

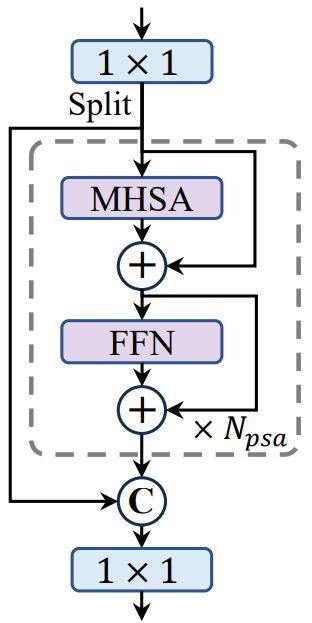

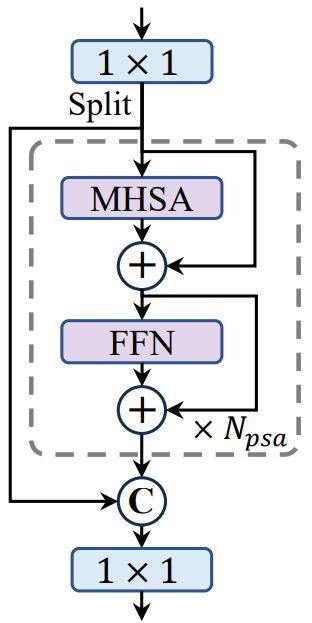

Finally, YOLOv10 employs an additional accuracy-driven optimization, partial self-attention (PSA). Self-attention is widely used in visual tasks for its powerful global modeling capabilities but comes with high computational costs. To address this, the YOLOv10 researchers introduce an efficient design for the partial self-attention module.

Specifically, they evenly divide the features across channels into two parts and apply self-attention (NPSA blocks) to only one part. Additionally, they optimize the attention mechanism by reducing the dimensions of the query and key and replacing LayerNorm with BatchNorm for faster inference. This reduces cost while retaining the global modeling benefits.

Moreover, PSA is only applied after the stage with the lowest resolution to control the computational overhead, leading to improved model performance.

Implementation And Applications Of YOLOv10

The accuracy- and efficiency-driven design is an evolutionary step for the YOLO family. This comprehensive inspection of components resulted in YOLOv10, a new generation of real-time, end-to-end object detection models.

While real-time object detection has existed since Faster R-CNN, minimizing latency has always been a key goal. A model’s latency is a crucial factor in determining its practical applications. High-integrity applications need strong efficiency and accuracy, and that is what YOLOv10 gives us.

We’ll explore the YOLOv10 code, and then look at how it can advance real-world applications.

YOLOv10 Inference-HuggingFace

Most YOLOs are easily implemented in Python via the Ultralytics library. This library gives us the option to train and fine-tune YOLO models on our data, or simply run inference. However, at the time of writing, YOLOv10 is still not fully integrated into the Ultralytics library. We can still try YOLOv10 and use its code via the available Colab notebook or the HuggingFace Spaces.

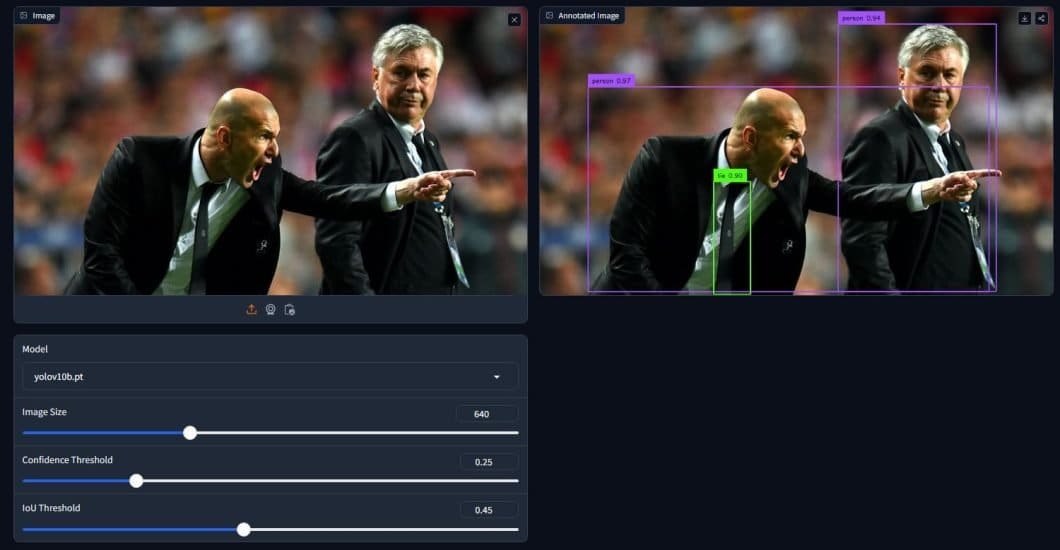

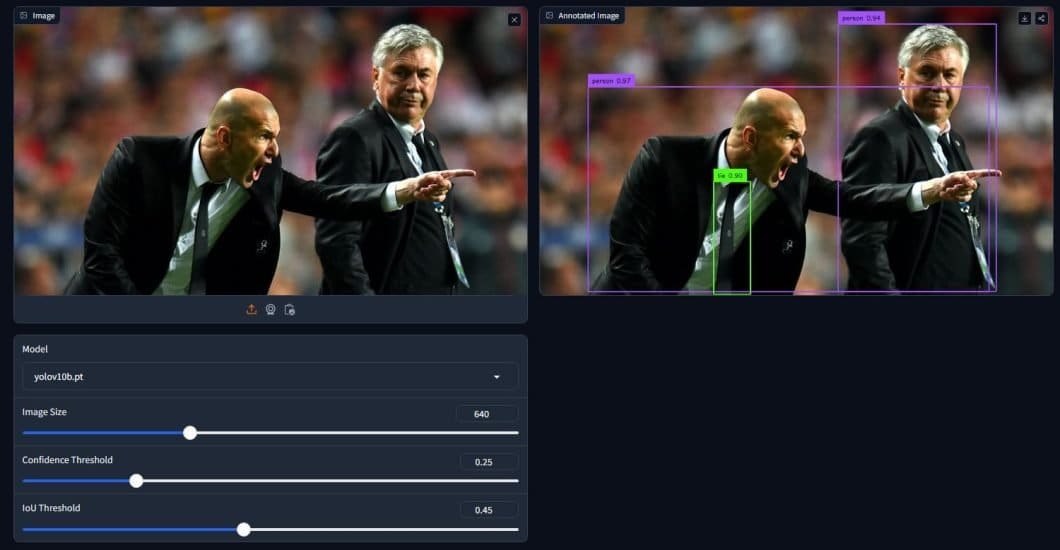

Let’s start by checking out the HuggingFace Space.

Using one of the available examples, we can see how quickly YOLOv10 generates predictions. We can also use the available options to test various settings and see how they differ. In the example above, we’re using the YOLOv10-base model with an image size of 640×640. Additionally, we have the confidence and IoU thresholds.

While the IoU threshold won’t offer many benefits during inference, we have learned its importance during training. The confidence threshold, on the other hand, is useful during inference, especially for complex images. A higher value yields more accurate but fewer predictions, and a lower value does the opposite.
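The effect of the confidence threshold is easy to see on a handful of detections. The labels and scores below are made up for illustration, and `filter_by_confidence` is a hypothetical helper, not part of any library.

```python
# Made-up detections for one image.
detections = [
    {"label": "person", "conf": 0.91},
    {"label": "dog", "conf": 0.48},
    {"label": "bicycle", "conf": 0.27},
    {"label": "person", "conf": 0.12},
]


def filter_by_confidence(dets, threshold):
    """Keep only the detections whose confidence reaches the threshold."""
    return [d for d in dets if d["conf"] >= threshold]


low = filter_by_confidence(detections, 0.25)   # permissive: more boxes, more noise
high = filter_by_confidence(detections, 0.50)  # strict: fewer, more reliable boxes

print(len(low), [d["label"] for d in low])
print(len(high), [d["label"] for d in high])
```

Raising the threshold from 0.25 to 0.50 drops the uncertain detections and keeps only the one the model is most sure about.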

Inference-Command Line Interface (CLI)

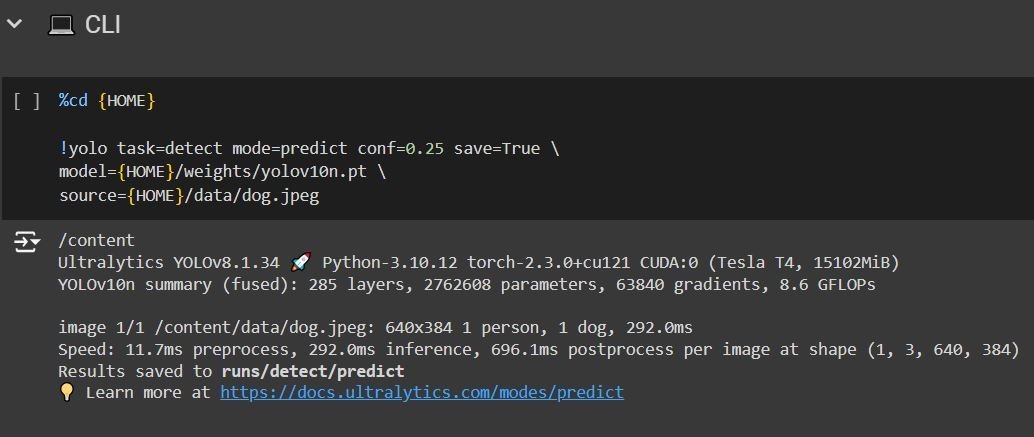

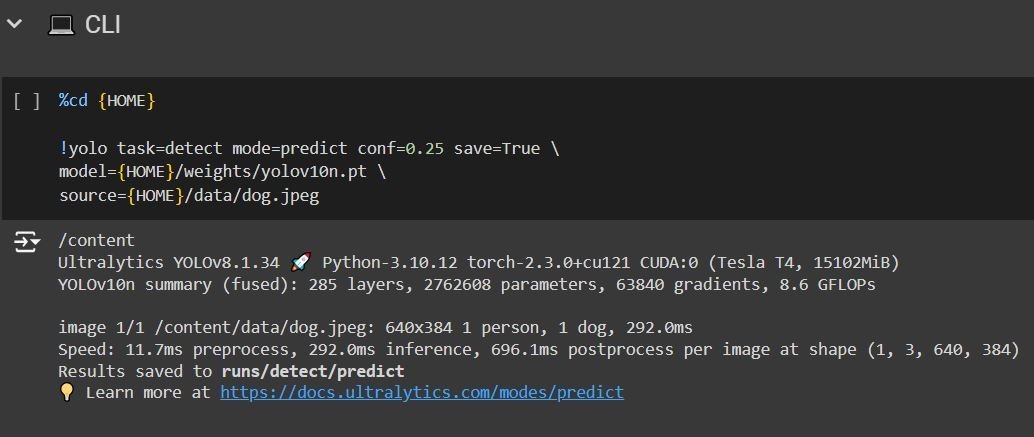

Additionally, we can delve into the code for YOLOv10 via the Colab notebook. The notebook tutorial is quite clear and gives you options like running inference using the command line interface (CLI) or the Python SDK, as well as an option to train on custom data.

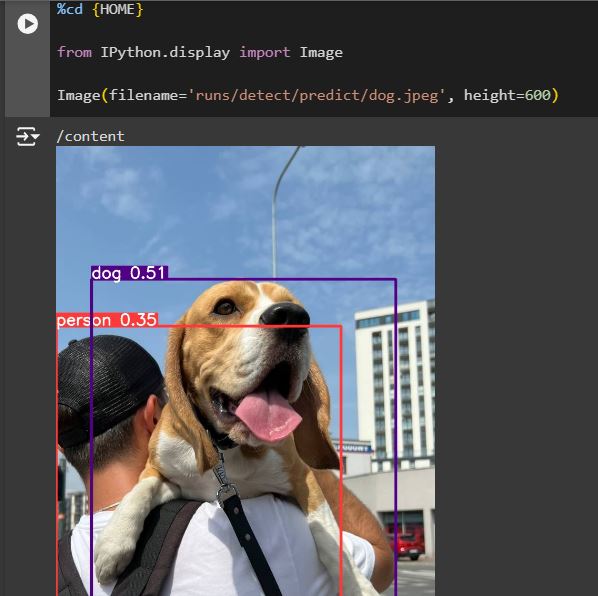

Before trying the CLI inference, run all the previous code blocks as they are, because they provide the necessary setup to use YOLOv10. The code above uses the yolov10-nano model with a confidence threshold of 0.25 and loads an image from the data provided by the notebook.

If we want to run inference with a different model size, a custom image, or a different confidence threshold, we can simply do:

# Navigate to the home directory.

%cd {HOME}

# Run CLI inference with the !yolo command: the task is detection and the mode is predict. Adjust conf as needed. Changing the letter after yolov10 in the weights file changes the model size (sizes are discussed earlier in the article). For source, upload an image directly to Colab from the left-hand panel, or mount Drive and copy the image path.

!yolo task=detect mode=predict conf=0.25 save=True model={HOME}/weights/yolov10l.pt source=/content/example.jpg

In the next code block, we can show the resulting prediction using the Python display library. The “filename” variable indicates where the result images are saved (notice that we use save=True in the CLI command).

Inference-Python SDK

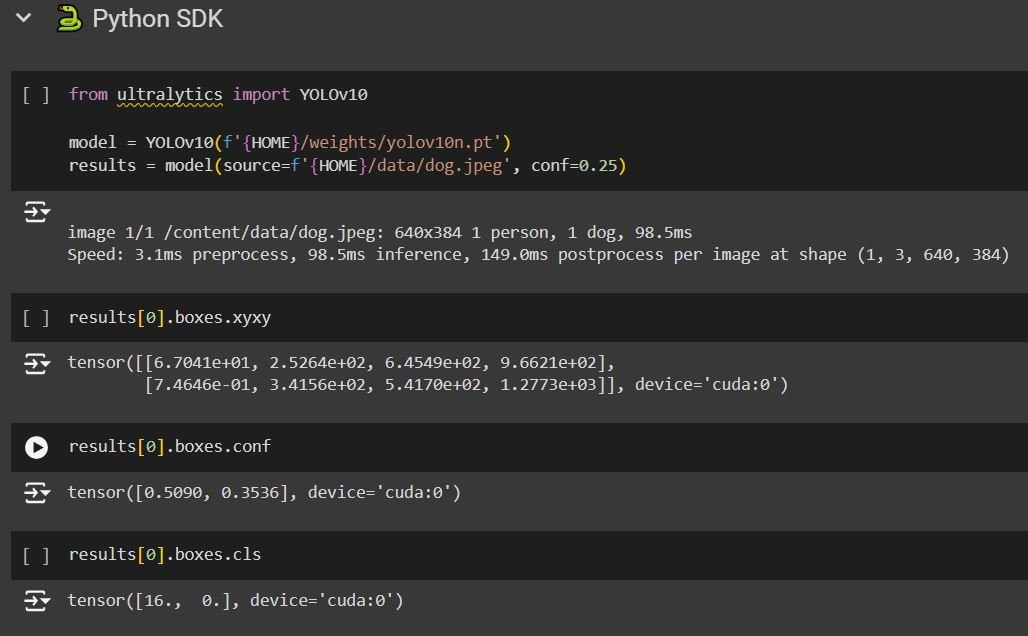

The code block after that shows the usage of YOLOv10 with the Python SDK.

The SDK inference provides us with more information about the prediction. We can see the coordinates of the boxes, the confidence, and lastly “boxes.cls”, the numeric ID of the detected class (category).

This code is also adjustable, so you can use the model size and the image you want. The next code block shows how we can display the prediction using the “supervision” library, which will also show information like the preprocessing and postprocessing speed, the inference speed, and the class names.

With this, we have covered the usage of YOLOv10 through code and HuggingFace. The notebook provided in the official YOLOv10 GitHub repository is quite useful, and the tutorial inside will guide you through the process. However, training YOLOv10 requires extra effort to create your own dataset and iterate on the training process.

Now let’s look at ways we can use these improvements of YOLOv10 in real-world applications.
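As a rough sketch of what reading those fields can look like, the snippet below wraps the inference call in a function and formats the boxes, confidences, and class IDs into readable rows. It assumes an `ultralytics` installation whose `YOLO` class can load YOLOv10 weights; the fork bundled with the YOLOv10 repository may expose a different class name. The weight file `yolov10n.pt`, the image path `example.jpg`, and both function names are placeholders, not taken from the notebook.

```python
def summarize(xyxy, conf, cls, names):
    """Turn raw box coordinates, confidences, and class IDs into readable rows."""
    return [
        {
            "box": [round(v, 1) for v in box],  # x1, y1, x2, y2 in pixels
            "confidence": round(c, 2),
            "class": names[int(k)],             # map the numeric ID to its name
        }
        for box, c, k in zip(xyxy, conf, cls)
    ]


def run_inference(weights="yolov10n.pt", source="example.jpg", conf=0.25):
    """Run one prediction and return summarized detections (placeholder paths)."""
    # Imported lazily so the helper above works without the package installed.
    from ultralytics import YOLO  # assumption: a release that supports YOLOv10 weights

    model = YOLO(weights)
    result = model.predict(source=source, conf=conf)[0]
    boxes = result.boxes
    return summarize(
        boxes.xyxy.tolist(), boxes.conf.tolist(), boxes.cls.tolist(), result.names
    )
```

Calling `run_inference()` with your own weights and image returns one dictionary per detected object. `summarize` can also be used on its own with any lists of boxes, scores, and class IDs.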

Real-World Applications For YOLOv10

YOLOv10’s efficiency, accuracy, and light weight make it suitable for a variety of applications, potentially replacing earlier YOLO models in most real-time detection applications. These new capabilities are pushing the boundaries of what’s possible in computer vision.

- Object Tracking: The latency improvement in YOLOv10 makes it well suited for use cases that need object tracking in video streams. Applications range from sports analytics (tracking players and ball movement) to security surveillance (identifying suspicious behavior).

- Autonomous Driving: Object detection is at the core of self-driving cars. The ability of an object detection model to detect and classify objects on the road is essential for this use case. YOLOv10’s speed and accuracy make it a prime candidate for real-time perception systems in autonomous vehicles.

- Robotic Navigation: Robots equipped with YOLOv10 can navigate complex environments by accurately recognizing objects and obstacles in their paths. This enables applications in manufacturing, warehouses, and even household chores.

- Agriculture: Object detection can be crucial for crop monitoring (identifying pests, diseases, or ripe produce) and automated harvesting. YOLOv10’s accuracy and light weight make it well suited for these applications.

While these are only a few applications, the possibilities for YOLOv10 are wide. A new age of real-time object detection is coming, and YOLOv10 might be the start.

What’s Next For YOLOv10?

YOLOv10 is a significant leap forward in the evolution of real-time object detection. Its innovative architecture, clever optimization, and strong performance make it a valuable tool for a variety of applications.

But what does the future hold for YOLOv10 and the broader field of real-time object detection? One thing is clear: innovation doesn’t stop here. Expect to see even more refined architectures, streamlined training processes, and a wider range of applications for this versatile technology.

YOLOv10 is a significant milestone, but it’s just one step in the ongoing evolution of object detection. We’re excited to see where this technology takes us next!
